Agent Framework Overview

Agents & Popular Frameworks Overview

"Agents aren't special — the ones that can think, act, and reflect on their own are." But when it comes to real-world deployment, the common questions are: how do you choose between Workflows and Agents? Which frameworks fit which scenarios? This page breaks down the key decisions and gives you a quick-reference comparison of the major frameworks.

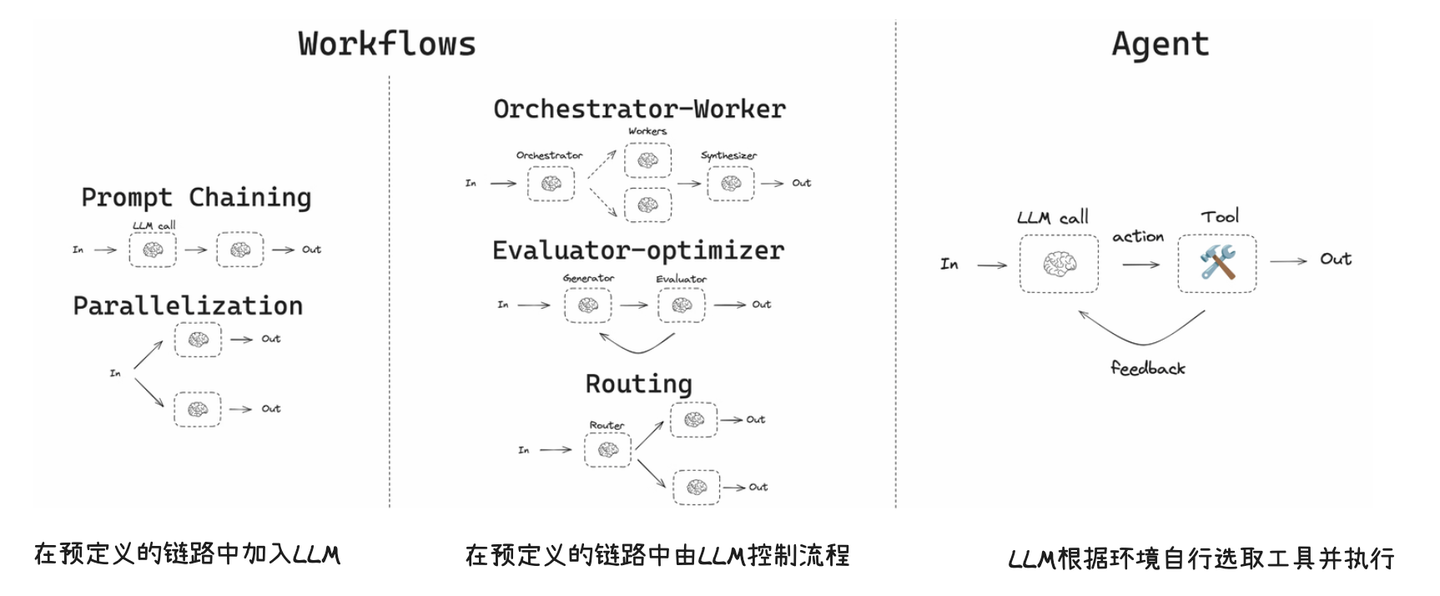

1. The Difference Between Workflows and Agents

First things first: not every scenario needs an Agent.

- Workflow: Steps are fixed, branches are limited, and you can enumerate all paths ahead of time. Something like "user submits form -> validate -> generate report -> send email" — every step is predetermined.

- Agent: Requires clarification during conversation, dynamic decision-making, cross-system coordination, and paths that can't be fully predicted. Something like "check this customer's refund status" — which system to query, whether you need extra info, and how to handle the result all require the AI to figure out on its own.
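To make the contrast concrete, here is a minimal sketch in plain Python. It is not tied to any framework: `run_workflow`, `run_agent`, `toy_decide`, and the two lookup tools are hypothetical names invented for illustration, and `toy_decide` stands in for what would be an LLM call in practice.

```python
from typing import Callable

# --- Workflow: the path is fixed and known ahead of time ---
def validate(form: dict) -> dict:
    if not form.get("email"):
        raise ValueError("email is required")
    return form

def generate_report(form: dict) -> str:
    return f"Report for {form['email']}"

def send_email(report: str) -> str:
    return f"sent: {report}"

def run_workflow(form: dict) -> str:
    # Every step is predetermined: validate -> generate report -> send email.
    return send_email(generate_report(validate(form)))

# --- Agent: the next step is chosen at run time ---
def run_agent(goal: str, decide: Callable, tools: dict, max_steps: int = 5) -> list:
    """Loop until `decide` returns "finish" or the step budget runs out."""
    history = []
    for _ in range(max_steps):
        action, arg = decide(goal, history)  # in practice, an LLM call
        if action == "finish":
            break
        history.append((action, tools[action](arg)))
    return history

# Hypothetical stand-in for the model's decision logic.
def toy_decide(goal, history):
    if not history:
        return ("lookup_order", "order-123")
    if history[-1][1] == "refund pending":
        return ("lookup_payment", "order-123")
    return ("finish", None)

# Hypothetical tools; real ones would call order and payment systems.
TOOLS = {
    "lookup_order": lambda order_id: "refund pending",
    "lookup_payment": lambda order_id: "refund issued",
}

workflow_result = run_workflow({"email": "a@example.com"})
agent_trace = run_agent("check this customer's refund status", toy_decide, TOOLS)
```

The workflow's call order is visible in the source code, while the agent's path only exists in `agent_trace` after the run. That difference is why the agent version is harder to debug and to bound in cost.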

Our experience from real projects: 80% of scenarios work fine with Workflows. Only the ones where paths are genuinely unpredictable actually need Agents. Many teams jump straight to Agents, then discover they're hard to debug, expensive to run, and tough to control — and end up going back to Workflows anyway because they're more stable.

The decision criteria are simple:

- Fixed steps -> Workflows are more stable, cheaper, and easier to control.

- Long-tail, variable paths -> Agents handle the "ask a question -> look something up -> then decide" flow better.

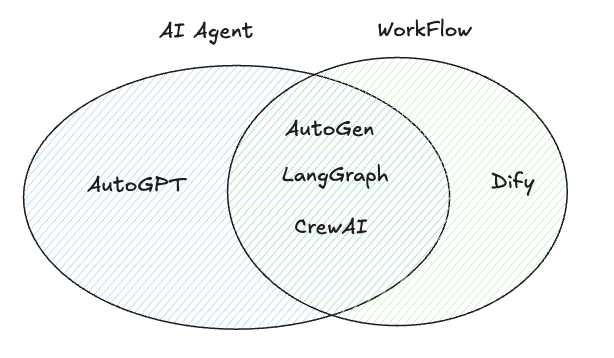

2. Framework Selection (Based on Popularity & Ecosystem)

Based on community traction and ecosystem maturity, here are five common frameworks:

| Framework | Ecosystem Traits | Best For |
|---|---|---|
| AutoGPT | High autonomy, rich tooling | General-purpose task automation |
| LangGraph | Graph structure, controllable | Step-by-step process tasks |
| Dify | Low-code, platform-style | Medium-complexity business apps |
| CrewAI | Multi-agent orchestration | Role-based collaboration |
| AutoGen | Multi-agent conversation | Multi-role collaboration & observability |

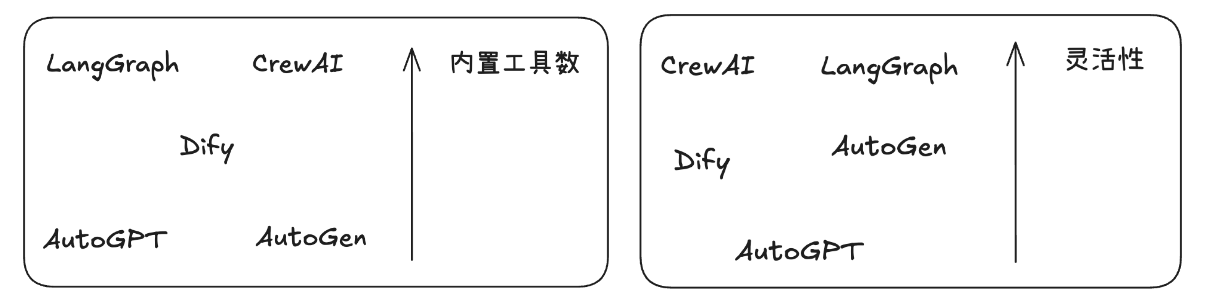

3. Framework Comparison Summary

This comparison table is based on our team's actual experience using these frameworks in real projects — it's not just a documentation summary:

| Framework | Best For | Strengths | Weaknesses | Our Recommendation |
|---|---|---|---|---|
| AutoGPT | Complex general tasks | High autonomy, task decomposition, rich tools | Expensive, less controllable | Good for exploration and prototyping, not recommended for production |
| LangGraph | Decomposable processes | Observable, debuggable, controllable | Limited autonomy | Top pick for production: controllability and debuggability are must-haves |
| Dify | Medium-complexity apps | Low barrier, quick to start | Broad but not deep | Great for MVP validation, especially when the team doesn't have dedicated AI engineers |
| CrewAI | Role-based collaboration | Strong tool ecosystem, flexible | Some capabilities need filling in | Worth trying for multi-agent collaboration scenarios |
| AutoGen | Multi-agent conversation | Native multi-agent support | Ecosystem still growing | Backed by Microsoft; bullish long-term, but docs and examples are limited right now |

4. When Should You Use an Agent?

An Agent is usually the better fit when:

- The problem can't be fully enumerated and paths are uncertain.

- You need to query and combine data across multiple systems dynamically.

- The conversation requires clarification, negotiation, and decision-making.

And the flip side: if you can draw a complete flowchart where every branch is accounted for — just use a Workflow. Don't over-engineer with Agents.
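The criteria above can be written down as a small checklist function. This is only a sketch of the rule of thumb, not a scoring model; `Scenario` and `recommend` are hypothetical names, and the sketch treats a complete flowchart as decisive, as the text suggests.

```python
from dataclasses import dataclass
from typing import List, Tuple

@dataclass
class Scenario:
    flowchart_complete: bool     # every branch can be drawn ahead of time
    needs_clarification: bool    # conversation involves clarification or negotiation
    cross_system_dynamic: bool   # data is queried and combined across systems at run time

def recommend(s: Scenario) -> Tuple[str, List[str]]:
    """Return the suggested approach plus the reasons behind it."""
    if s.flowchart_complete:
        # A complete flowchart wins regardless of the other signals.
        return "workflow", ["every branch is accounted for"]
    reasons = ["paths cannot be fully enumerated"]
    if s.needs_clarification:
        reasons.append("conversation needs clarification")
    if s.cross_system_dynamic:
        reasons.append("dynamic cross-system queries")
    return "agent", reasons

form_pipeline = recommend(Scenario(True, False, False))
refund_lookup = recommend(Scenario(False, True, True))
```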

5. Real-World Scenario: Branch Explosion in Customer Service

This is an actual situation we ran into on an e-commerce project, and it's quite representative.

Workflows hit "branch explosion" with long-tail problems: a single "my package hasn't arrived" case might require combining logistics status, policy windows, user tier, address issues, and promo rules. A fixed workflow becomes complex and unmaintainable. We initially built this with a rules engine — 200+ rules, and every time we changed one we worried about breaking the others.

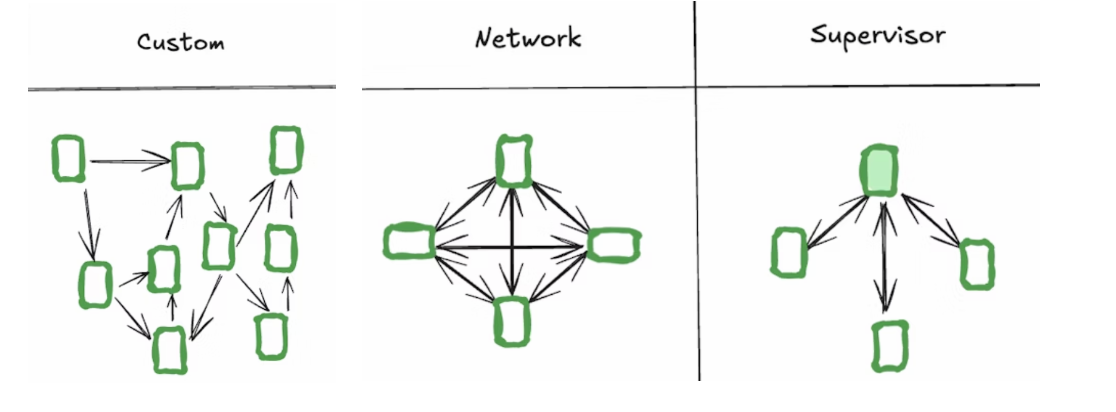

We eventually switched to an Agent pattern, splitting responsibilities like a team:

- Planner decomposes intent and clarifies ("Are you checking shipping or requesting a refund?")

- Tool Agent queries logistics/payment/CRM (each calling its own APIs)

- Policy Agent reasons about compliance policies ("Is this order within the 7-day no-questions-asked return window?")

- Execution Agent operates on tickets and closes the loop
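The role split above can be sketched as four small functions passing a shared case record along. This is an illustrative skeleton, not our production code: the `Case` fields, the stubbed tool results, and the choice to measure the 7-day window from the order date are all assumptions, and each function stands in for what would be an LLM-backed agent.

```python
from dataclasses import dataclass, field
from datetime import date

@dataclass
class Case:
    message: str
    order_date: date
    today: date
    intent: str = ""
    facts: dict = field(default_factory=dict)
    decision: str = ""
    ticket: str = ""

def planner(case: Case) -> Case:
    # Decompose intent; a real Planner would ask a clarifying question when unsure.
    case.intent = "refund" if "refund" in case.message.lower() else "shipping"
    return case

def tool_agent(case: Case) -> Case:
    # Hypothetical stubs standing in for logistics / payment / CRM API calls.
    case.facts["logistics"] = "delivered"
    case.facts["user_tier"] = "gold"
    return case

def policy_agent(case: Case) -> Case:
    # Assumption: the 7-day return window is counted from the order date.
    days_since_order = (case.today - case.order_date).days
    if case.intent == "refund":
        case.decision = "approve" if days_since_order <= 7 else "escalate"
    else:
        case.decision = "inform"
    return case

def execution_agent(case: Case) -> Case:
    # Operate on the ticket and close the loop.
    case.ticket = f"{case.intent}:{case.decision}:closed"
    return case

def handle(case: Case) -> Case:
    for role in (planner, tool_agent, policy_agent, execution_agent):
        case = role(case)
    return case

result = handle(Case("I want a refund", date(2025, 1, 1), date(2025, 1, 5)))
```

Each role reads and writes only its own part of the case, which is what keeps the policy logic out of the tool calls and the ticket handling.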

Results after the switch: rules maintenance cost dropped 70%, and the long-tail issue resolution rate rose from 45% to 82%. But API call costs went up about 3x, so whether the trade-off is worth it depends on your business value calculation.
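One way to frame that calculation is a rough monthly break-even estimate. The three ratios below come from the results above; everything else (the function name, the inputs, and the sample figures in the usage line) is hypothetical. The sketch also assumes "about 3x" means the new API bill is three times the old one.

```python
MAINTENANCE_REDUCTION = 0.70        # rules maintenance cost dropped 70%
RESOLUTION_GAIN = 0.82 - 0.45       # long-tail resolution rate: 45% -> 82%
API_COST_MULTIPLIER = 3             # assumption: new API cost = 3 x old cost

def monthly_net_benefit(maintenance_cost: float,
                        api_cost: float,
                        long_tail_tickets: int,
                        value_per_resolution: float) -> float:
    """Positive result: the Agent pattern pays for itself under these inputs."""
    maintenance_saved = MAINTENANCE_REDUCTION * maintenance_cost
    extra_api_cost = (API_COST_MULTIPLIER - 1) * api_cost
    extra_resolved = RESOLUTION_GAIN * long_tail_tickets
    return maintenance_saved + extra_resolved * value_per_resolution - extra_api_cost

# Hypothetical inputs, for illustration only.
net = monthly_net_benefit(maintenance_cost=10_000, api_cost=2_000,
                          long_tail_tickets=1_000, value_per_resolution=5.0)
```

With a small long-tail volume or a low value per resolved ticket, the same function goes negative. That is the case where staying on a Workflow makes sense.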

6. Framework Quick Reference

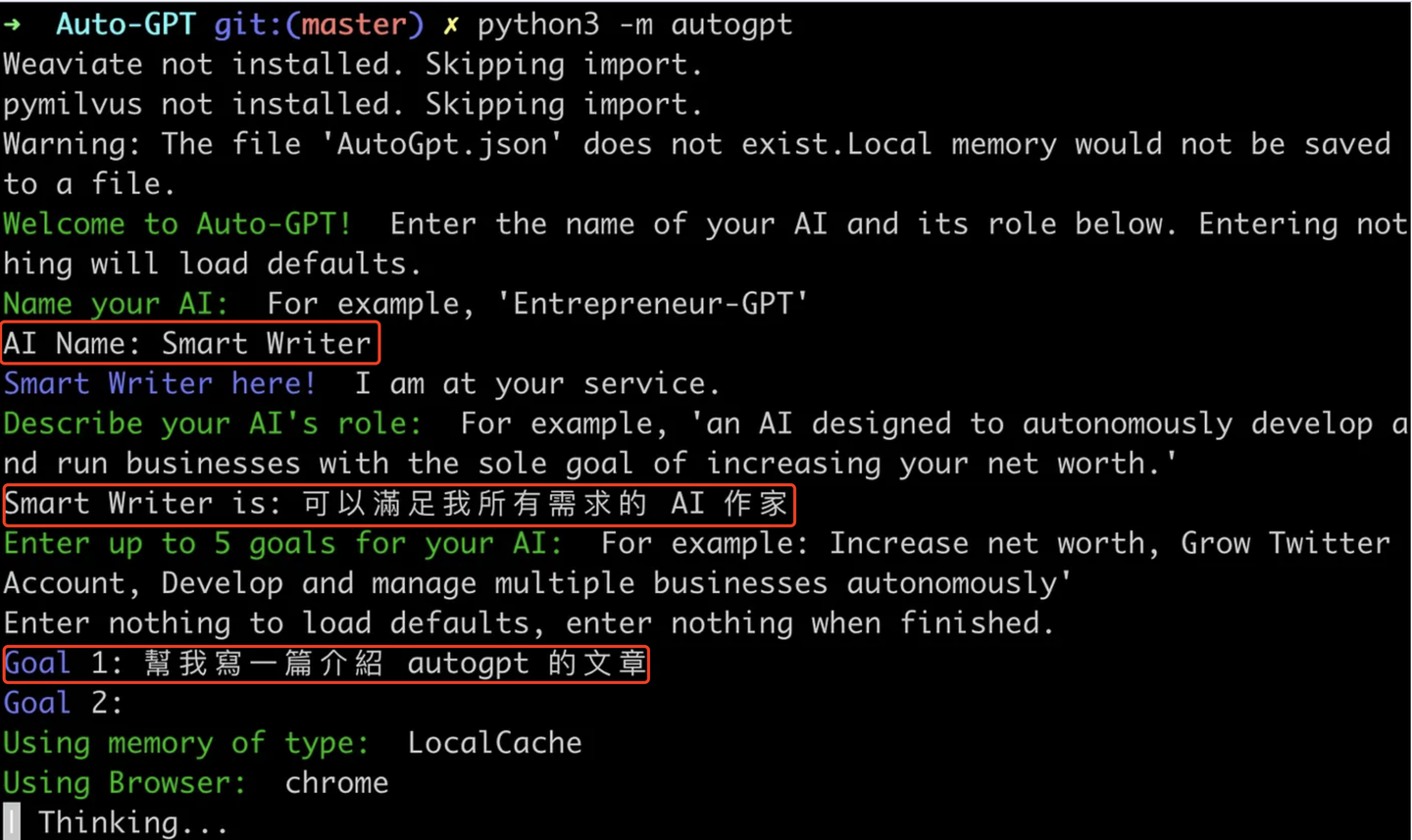

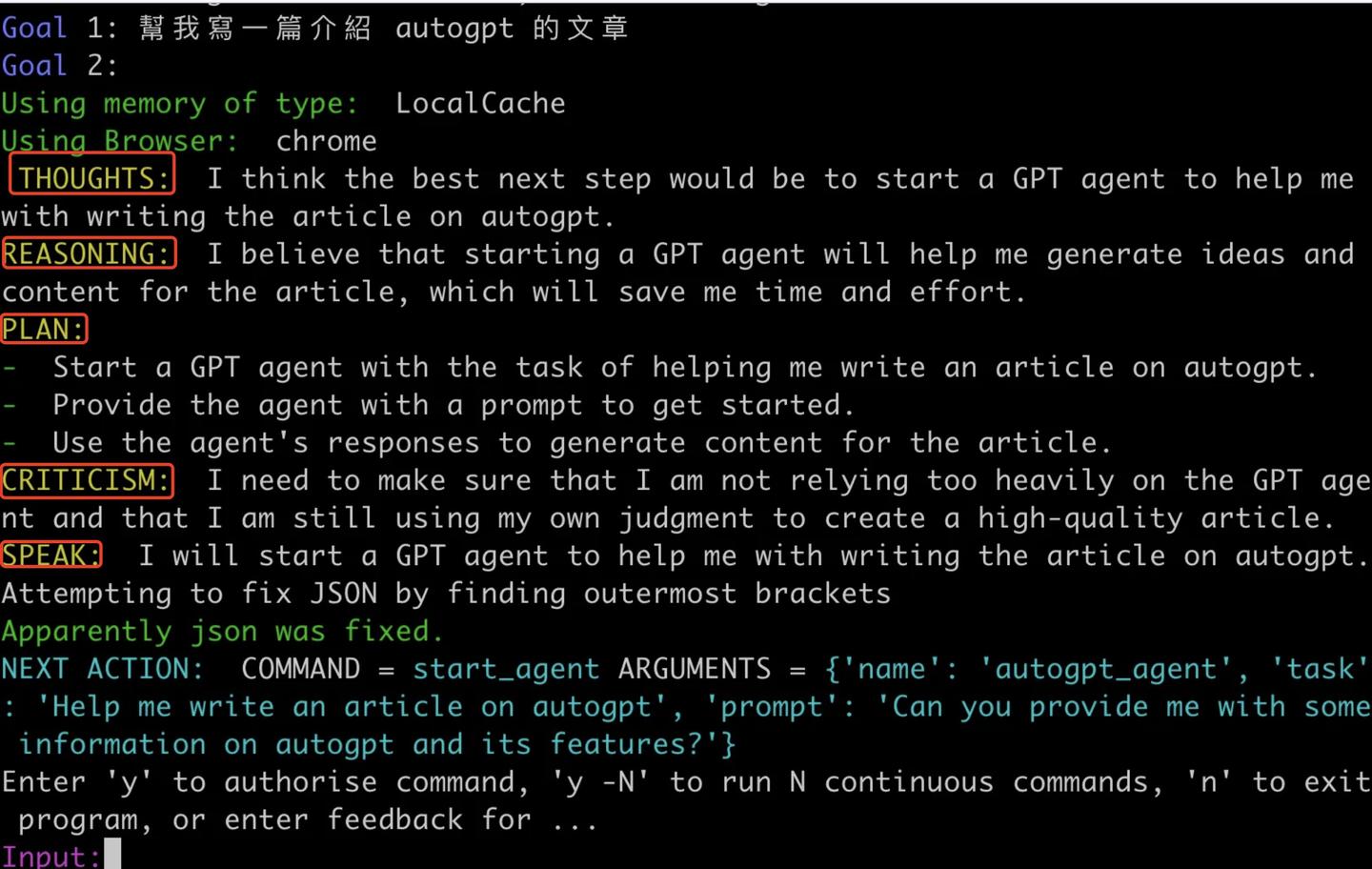

6.1 AutoGPT

Positioning: High-autonomy Agent framework, strong at task decomposition and multi-step execution.

Strengths:

- Goal-driven, automatic subtask decomposition

- Rich tool interfaces, good for complex chain-of-tasks

Weaknesses:

- Longer tasks tend to drift from context — we tested a 10-step task where by step 7 the model had "forgotten" the original goal

- Cost and execution efficiency need careful management: a single complex task can easily burn 10K+ tokens

6.2 LangGraph

Positioning: Graph-structure orchestration framework, emphasizing controllable flow and observability.

Strengths:

- Clear structure, controllable process — what each node does and how transitions work is explicit

- Easy to debug, supports persistent state — when production issues come up you can quickly pinpoint which node broke

Weaknesses:

- Limited autonomy — if your scenario needs the Agent to decide its own next step, LangGraph requires extra design work

- Pre-built patterns have limited flexibility
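To show what "explicit nodes and transitions" buys you, here is a framework-free sketch of graph-style orchestration. It deliberately does not use the LangGraph API; `NODES`, `EDGES`, and `run_graph` are hypothetical names, and the real library adds state persistence and richer tooling on top of this idea.

```python
from typing import Callable, Dict, Optional

State = Dict[str, object]

def classify(state: State) -> State:
    state["route"] = "refund" if "refund" in str(state["text"]) else "shipping"
    return state

def refund(state: State) -> State:
    state["answer"] = "refund flow started"
    return state

def shipping(state: State) -> State:
    state["answer"] = "tracking info sent"
    return state

# What each node does is explicit...
NODES: Dict[str, Callable[[State], State]] = {
    "classify": classify, "refund": refund, "shipping": shipping,
}

# ...and so is every transition: each entry picks the next node (None = stop).
EDGES: Dict[str, Callable[[State], Optional[str]]] = {
    "classify": lambda s: str(s["route"]),
    "refund": lambda s: None,
    "shipping": lambda s: None,
}

def run_graph(state: State, start: str = "classify") -> State:
    state = dict(state, trace=[])
    node: Optional[str] = start
    while node is not None:
        state["trace"].append(node)  # record the path for debugging
        try:
            state = NODES[node](state)
        except Exception as exc:
            # The failing node is named, so production issues are easy to pinpoint.
            raise RuntimeError(f"node {node!r} failed after {state['trace']}") from exc
        node = EDGES[node](state)
    return state

out = run_graph({"text": "where is my refund"})
```

The trade-off named under Weaknesses shows up here too. The model only chooses among edges you have already drawn, so open-ended "decide your own next step" behavior has to be designed in as an extra node or loop.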

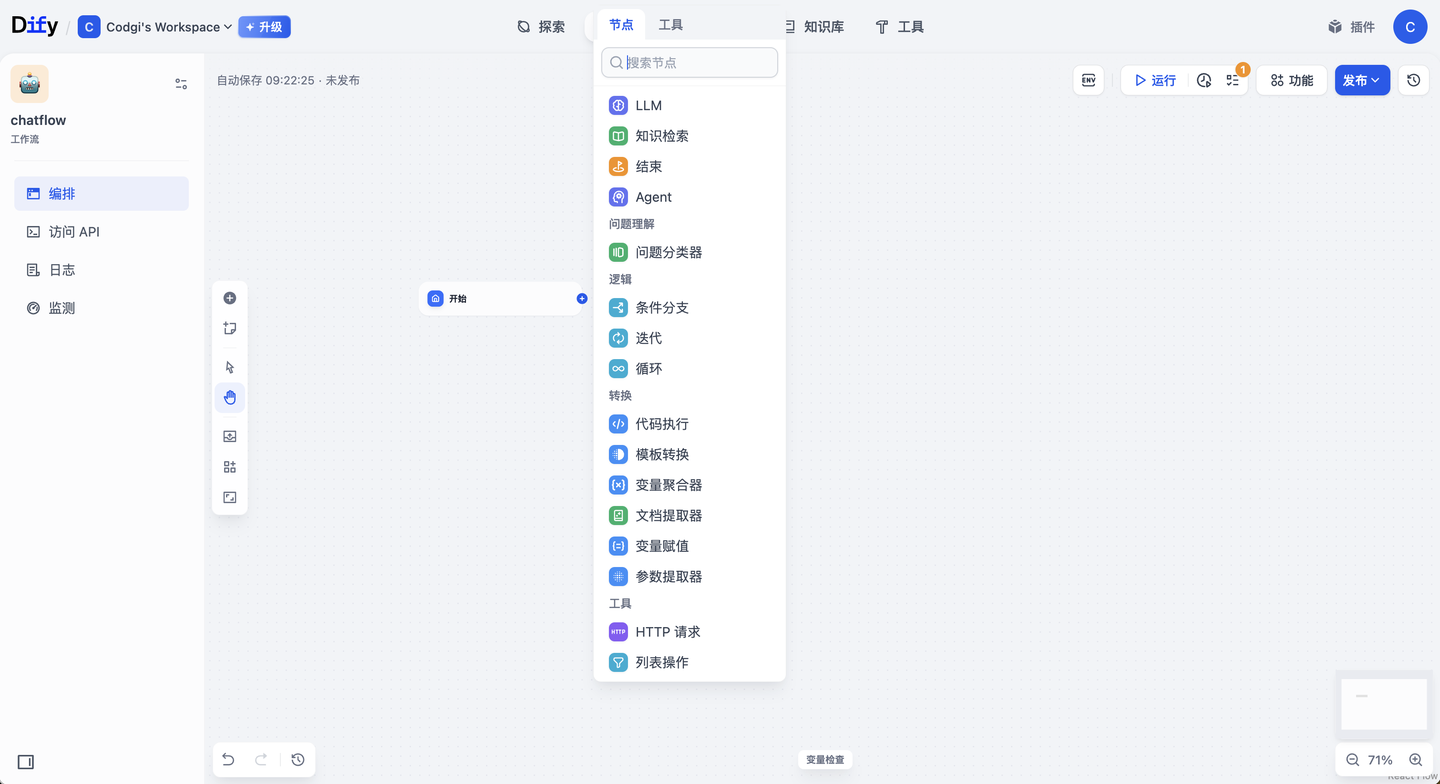

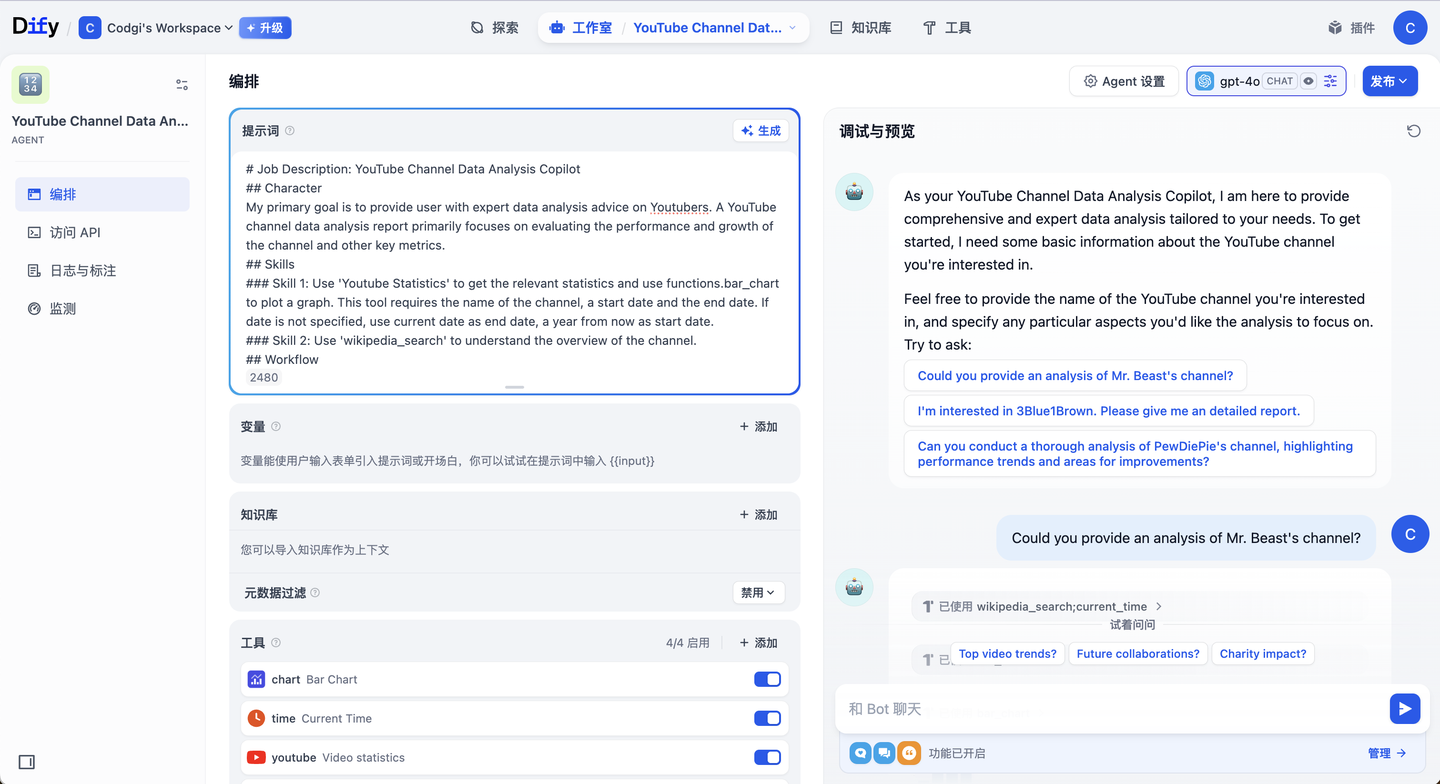

6.3 Dify

Positioning: Low-code AI application platform, focused on fast delivery.

Strengths:

- Quick to learn, easy model and tool integration — drag and drop to build a RAG app

- Good for medium-complexity applications

Weaknesses:

- Heavy-duty applications need careful complexity trade-offs

- Deep customization requires secondary development — e.g., custom retrieval strategies or complex Agent collaboration logic

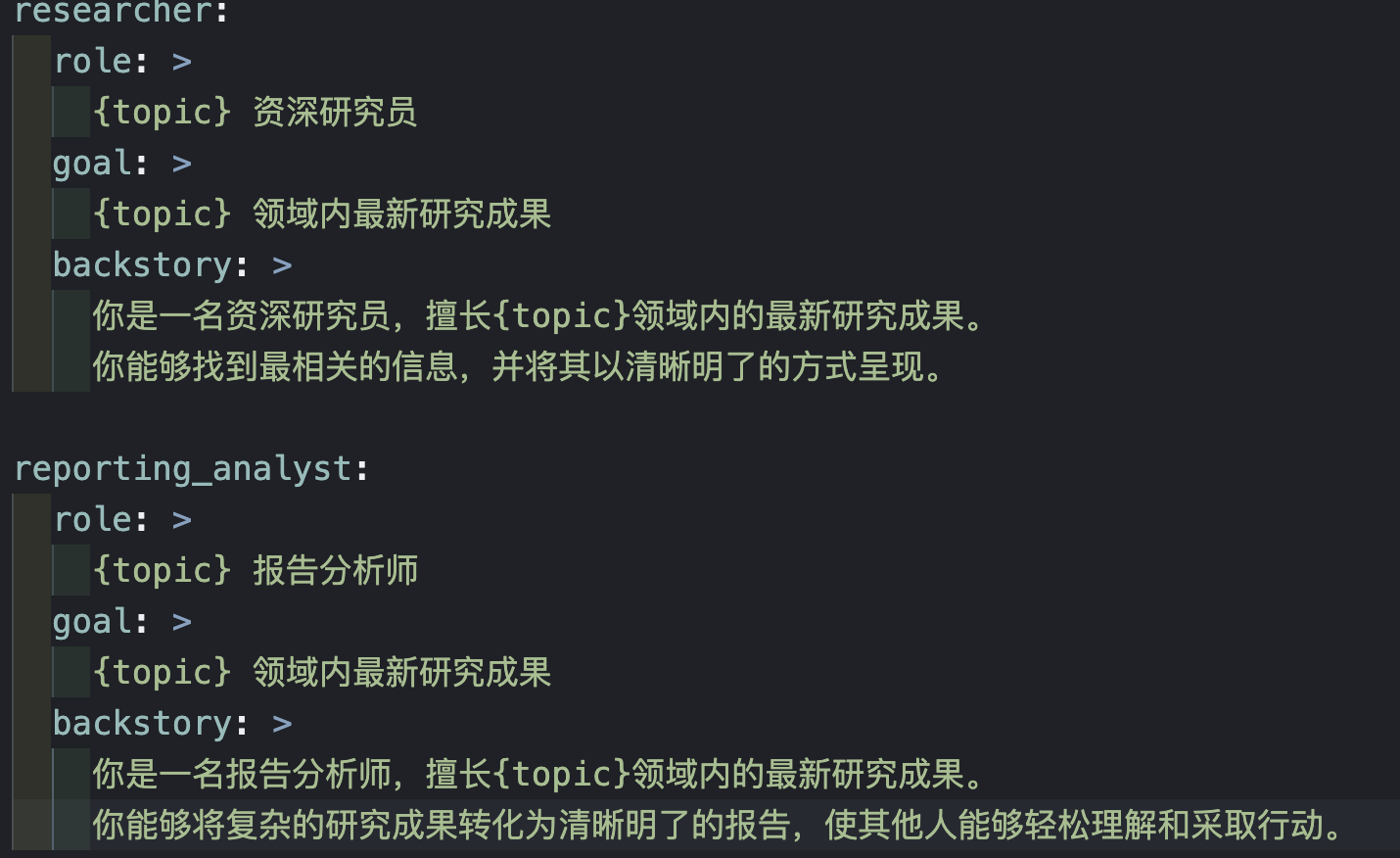

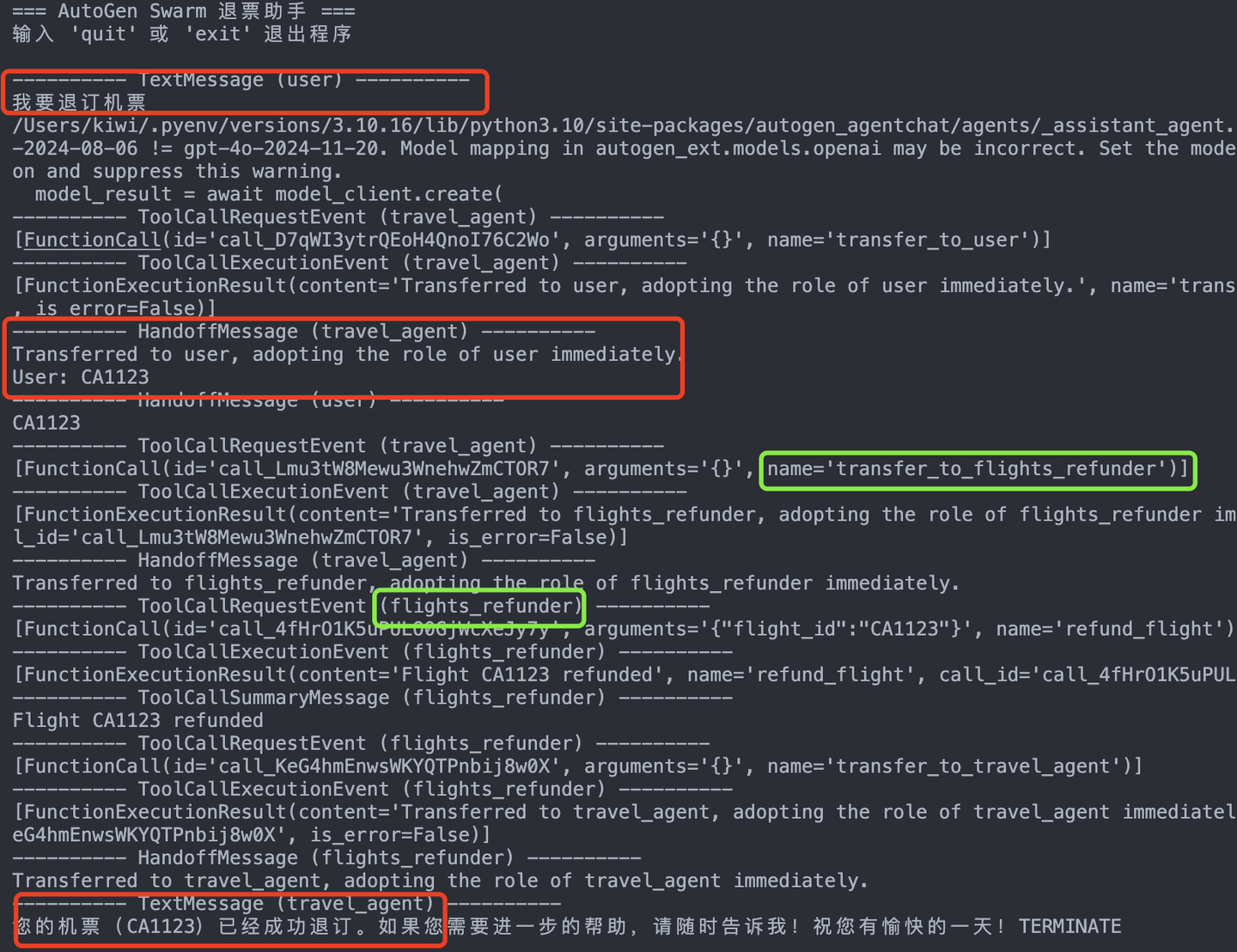

6.4 CrewAI

Positioning: Multi-agent collaboration framework, emphasizing role division.

Strengths:

- Rich ecosystem integrations

- Good for task exploration and collaboration — "Researcher" gathers info, "Analyst" summarizes, "Editor" polishes output

Weaknesses:

- Certain capabilities (like code sandboxing) need to be added separately

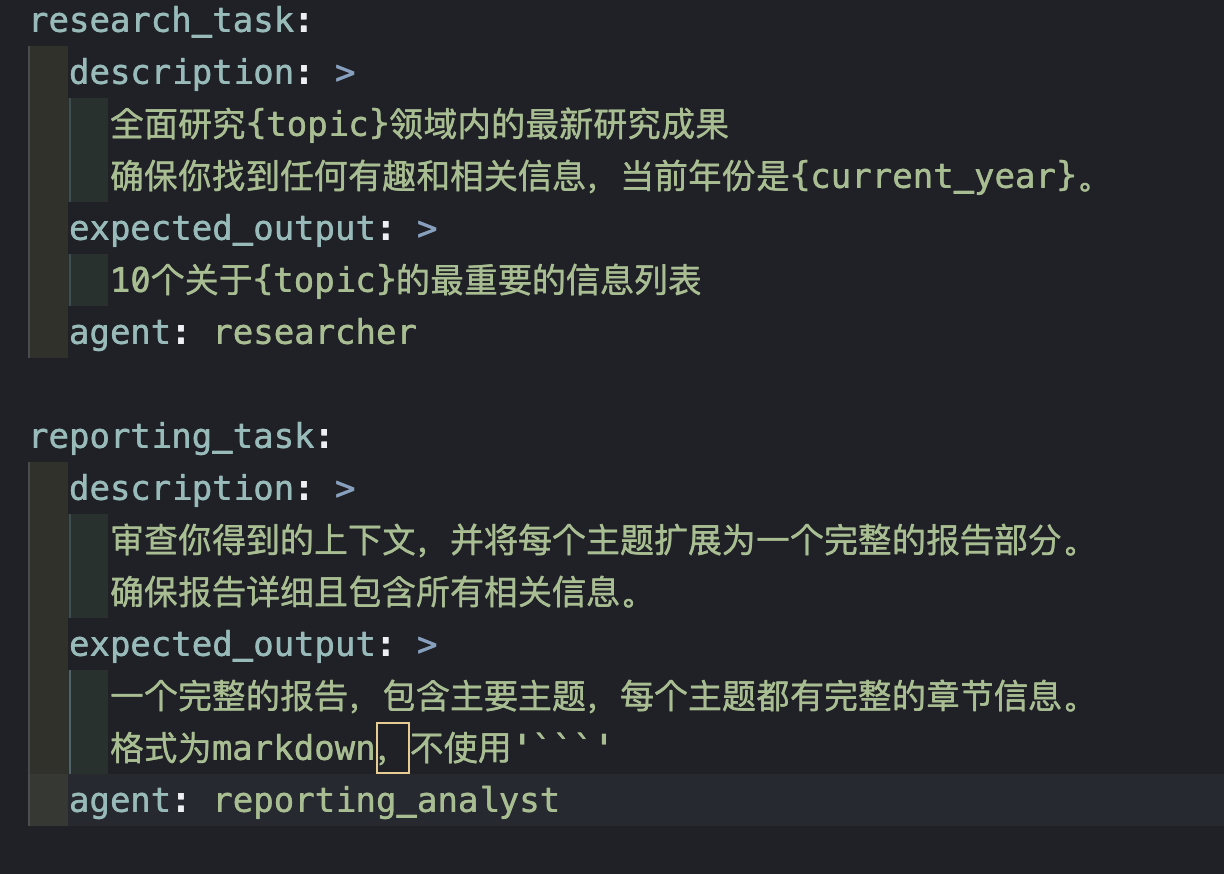

6.5 AutoGen

Positioning: Microsoft's open-source multi-agent conversation framework, emphasizing collaboration and observability.

Strengths:

- Native multi-agent support

- Flexible conversation flow control

Weaknesses:

- Ecosystem is still maturing, and docs and examples lag behind established frameworks; that said, Microsoft is investing heavily, and the 2025 update cadence has clearly accelerated

7. Summary

Before picking a framework, answer one question: is your scenario a Workflow or an Agent?

Workflows fit processes that are enumerable, predictable, and controllable; Agents fit complex problems that call for dynamic decision-making, cross-system collaboration, and clarification or negotiation.

For production Agents, our recommendation is LangGraph first — controllability and debuggability matter more than flexibility in production. For quick idea validation, Dify has the fastest onboarding. For multi-Agent collaboration research, both CrewAI and AutoGen are worth exploring.

The final choice comes down to three factors: task shape (Workflow vs Agent), team capability (do you have AI engineers?), and operating cost (Agent API calls typically run 3-5x the cost of Workflows).

Frequently Asked Questions

The most commonly searched questions about this chapter's topic.

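Should my project use a Workflow or an Agent? How do I decide?

Rule of thumb: 80% of scenarios are fine with a Workflow. The test is three questions: can the steps be fully enumerated, are the branches limited, and can you draw the complete flowchart ahead of time? If all three answers are yes, use a Workflow (more stable, cheaper, easier to control). Move to an Agent only when the path can't be defined in advance, the conversation needs clarification, or data must be combined dynamically across multiple systems (for example, "check this customer's refund status"). Many teams start with an Agent, find it hard to debug, expensive, and hard to control, and only become stable after falling back to a Workflow.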

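Among AutoGPT, LangGraph, Dify, CrewAI, and AutoGen, which should I choose for production?

LangGraph is the top pick for production: graph-structured orchestration, observable and debuggable flows, persistent state support, and an explicit definition of what each node does and how transitions work. AutoGPT is highly autonomous but expensive and harder to control, so it suits exploratory prototypes and is not recommended for direct production use. Dify is low-code and quick to pick up, which suits fast MVP validation or teams without dedicated AI engineers. CrewAI suits multi-Agent role-based collaboration. AutoGen is backed by Microsoft and looks promising long-term, but as of 2025 its docs and examples are still catching up. The authors' hands-on experience: in production, controllability and debuggability matter more than flexibility.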

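How much more expensive is an Agent than a Workflow? When is the cost worth it?

Agent API call costs typically run 3-5x those of a Workflow. A real case from this chapter: an e-commerce customer service system moved from a rules engine (200+ rules) to an Agent pattern (Planner + Tool Agent + Policy Agent + Execution Agent). Rules maintenance cost dropped 70% and the long-tail issue resolution rate rose from 45% to 82%, but API call costs increased about 3x. The trade-off depends on business value: do the long-tail coverage and the maintenance labor saved outweigh the extra token spend? For standard processes they clearly do not; the cost pays off in scenarios such as complex customer service or multi-system investigation.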

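Why does AutoGPT tend to drift on long tasks?

Context management can't keep up. The authors tested a 10-step task, and by step 7 the model had "forgotten" the original goal, because each step's reasoning and tool output is piled into the context window and dilutes the original instruction more and more as the task goes on. This is also why a single complex task can easily consume 10K+ tokens. There are three fixes: switch to a framework with explicit state management such as LangGraph, periodically summarize completed steps to free up context space, or split the task into multiple independent agent runs (each starting from a clean context).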
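The "summarize completed steps" mitigation can be sketched as a small context-building helper. This is a framework-independent illustration, not AutoGPT's own mechanism: `compact` and its parameters are hypothetical, and the default summarizer is a placeholder for what would normally be an LLM summarization call.

```python
from typing import Callable, List, Optional

def compact(goal: str,
            steps: List[str],
            keep_last: int = 3,
            summarize: Optional[Callable[[List[str]], str]] = None) -> List[str]:
    """Build the next prompt context.

    The original goal is pinned at the top so it is never pushed out,
    older steps are collapsed into one summary line, and only the most
    recent steps are kept verbatim.
    """
    if summarize is None:
        # Placeholder; in practice this would be a model-generated summary.
        summarize = lambda old: f"{len(old)} earlier steps completed"
    split = max(len(steps) - keep_last, 0)
    old, recent = steps[:split], steps[split:]
    context = [f"GOAL: {goal}"]
    if old:
        context.append(f"SUMMARY: {summarize(old)}")
    context.extend(recent)
    return context

steps = [f"step {i}" for i in range(1, 8)]
context = compact("book a trip", steps)
```

Calling `compact` before every model call keeps the context size roughly constant instead of growing with each step, at the cost of losing detail from the summarized steps.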

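How exactly do you split a customer service system when moving from a rules engine to multi-Agent collaboration?

The chapter's real e-commerce customer service case uses four roles: (1) Planner: decomposes intent and clarifies ("Are you checking shipping or requesting a refund?"); (2) Tool Agent: queries logistics, payment, and CRM, each through its own APIs; (3) Policy Agent: reasons about compliance policies ("Is this order within the 7-day no-questions-asked return window?"); (4) Execution Agent: operates on tickets and closes the loop. The core of this split is to divide by the nature of the decision rather than by functional module: each Agent gets a clean context and a single responsibility, which is far more maintainable than stuffing 200+ rules into a single Agent.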