Agent Framework Comparison

This article compares the current mainstream Agent frameworks: LangChain, LangGraph, AutoGen, CrewAI, smolagents, OpenAI Swarm, and OpenManus. It covers positioning, features, use cases, and selection advice to help you make fast technical decisions.

1. LangChain

Positioning: General-purpose LLM application framework with modular wrappers for Prompts, Memory, Tools, and Agents. Strengths: Mature ecosystem, rich tooling, great for rapid prototyping. Limitations: Complex flow orchestration requires extra control logic; control over multi-step execution is only moderate. Best for: Single-agent scenarios that need fast integration with tools and data sources.

2. LangGraph

Positioning: Graph-based Agent orchestration framework focused on state management and controllable flow. Strengths: Supports branching and looping, human-in-the-loop steps, and observable, recoverable execution. Limitations: Steeper learning curve; agents have relatively limited autonomy. Best for: Complex workflows that need explicit control over execution paths.

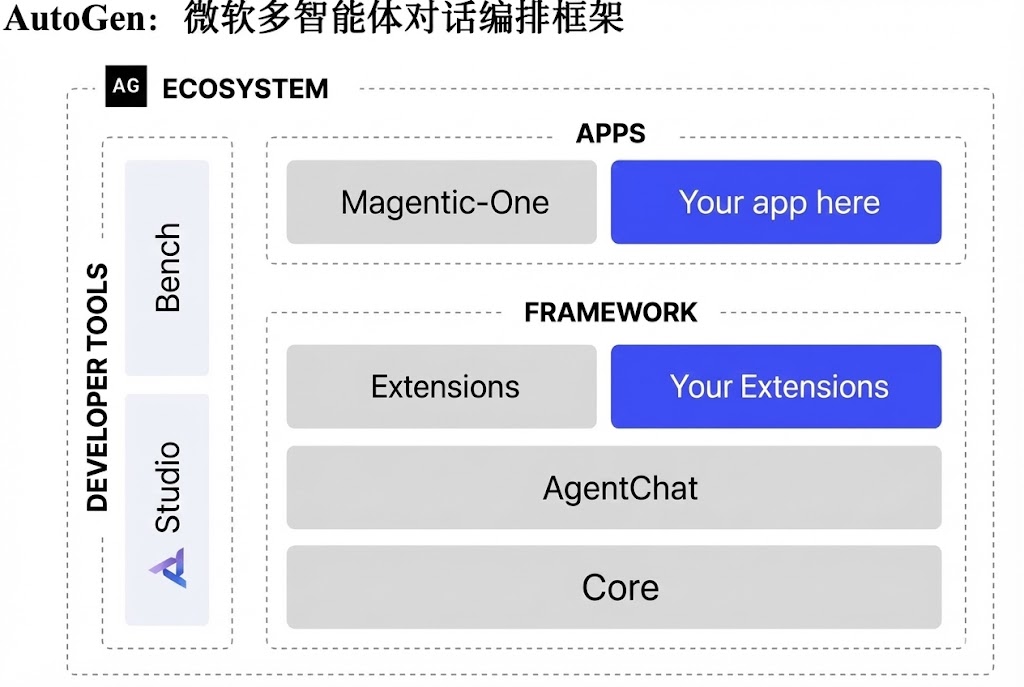

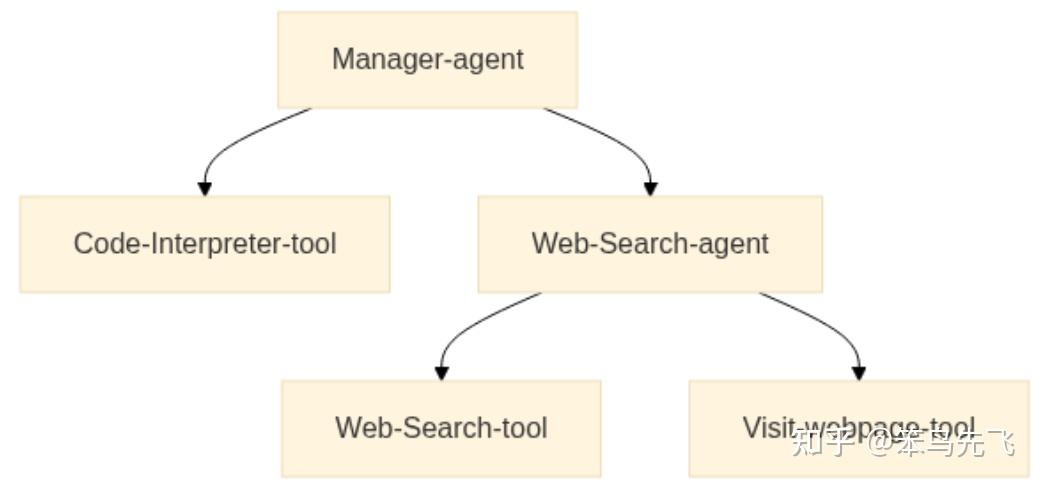
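To make "graph-based orchestration" concrete, here is a minimal plain-Python sketch of the idea: nodes transform a shared state, and edges (including one conditional edge) decide what runs next. It does not use LangGraph's API; the node names, the `run_graph` helper, and the approval rule are all hypothetical and exist only for illustration.

```python
from typing import Callable, Dict, List, Tuple

State = Dict[str, object]

def draft(state: State) -> State:
    # Stand-in for an LLM call: each visit produces a new draft version.
    attempts = int(state.get("attempts", 0)) + 1
    return {**state, "attempts": attempts, "draft": f"draft v{attempts}"}

def review(state: State) -> State:
    # Hypothetical rule: approve once the draft has been produced twice.
    return {**state, "approved": int(state["attempts"]) >= 2}

def route_after_review(state: State) -> str:
    # Conditional edge: loop back to "draft" or stop.
    return "END" if state["approved"] else "draft"

NODES: Dict[str, Callable[[State], State]] = {"draft": draft, "review": review}
EDGES: Dict[str, Callable[[State], str]] = {
    "draft": lambda state: "review",  # unconditional edge
    "review": route_after_review,     # branching / looping edge
}

def run_graph(start: str, state: State, max_steps: int = 10) -> Tuple[State, List[str]]:
    # The trace records every node visit, which is what makes the run observable.
    trace: List[str] = []
    node = start
    for _ in range(max_steps):  # hard cap so a bad route cannot loop forever
        if node == "END":
            break
        state = NODES[node](state)
        trace.append(node)
        node = EDGES[node](state)
    return state, trace

final_state, trace = run_graph("draft", {})
print(trace)
```

Because every transition goes through `EDGES`, the execution path is explicit and auditable, which is the trade-off this section describes: more control, less free-form autonomy.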

3. AutoGen

Positioning: Microsoft's open-source multi-agent conversation framework, emphasizing dialog-driven collaboration. Strengths: Native multi-agent support, conversational collaboration, extensible. Limitations: High debugging cost; the ecosystem is still maturing. Best for: Multi-role collaboration, research, and exploratory tasks.

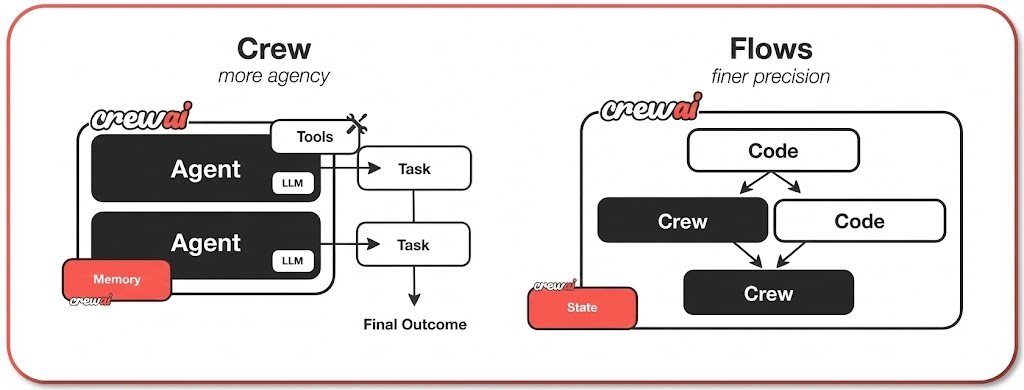
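Dialog-driven collaboration can be pictured as agents taking turns on a shared message history until one of them signals completion. The sketch below is plain Python, not AutoGen's API; the `writer` and `critic` functions, the `APPROVE` stop word, and `run_chat` are assumptions made up for this example.

```python
from typing import Callable, List, Tuple

Message = Tuple[str, str]  # (speaker, text)
AgentFn = Callable[[List[Message]], str]

def writer(history: List[Message]) -> str:
    # Stand-in for an LLM-backed agent: issue a new revision per critic reply.
    revisions = sum(1 for speaker, _ in history if speaker == "critic")
    return f"proposal r{revisions}"

def critic(history: List[Message]) -> str:
    # Stand-in reviewer: accept the second proposal it sees.
    proposals = sum(1 for speaker, _ in history if speaker == "writer")
    return "APPROVE" if proposals >= 2 else "please revise"

def run_chat(agents: List[Tuple[str, AgentFn]], max_turns: int = 6) -> List[Message]:
    history: List[Message] = []
    for turn in range(max_turns):  # turn cap bounds cost if nobody approves
        name, agent = agents[turn % len(agents)]  # simple round-robin speaker order
        reply = agent(history)
        history.append((name, reply))
        if reply == "APPROVE":
            break
    return history

chat = run_chat([("writer", writer), ("critic", critic)])
for speaker, text in chat:
    print(f"{speaker}: {text}")
```

The debugging cost mentioned above shows up here in miniature: the outcome depends on the whole message history, so reproducing a failure means replaying the conversation rather than re-running one function.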

4. CrewAI

Positioning: Team-style multi-agent orchestration framework. Strengths: Clear role division, YAML-friendly configuration, lots of tool integrations. Limitations: In complex scenarios you still have to add tools and flow logic by hand. Best for: Multi-agent task collaboration with a clearly defined process.

5. smolagents

Positioning: Minimalist framework built around "Code as Actions." Strengths: Lightweight, fast to pick up, lets the model write code to call tools directly. Limitations: Smaller ecosystem, complex flows require DIY infrastructure. Best for: Quick experiments, teaching, and lightweight projects.

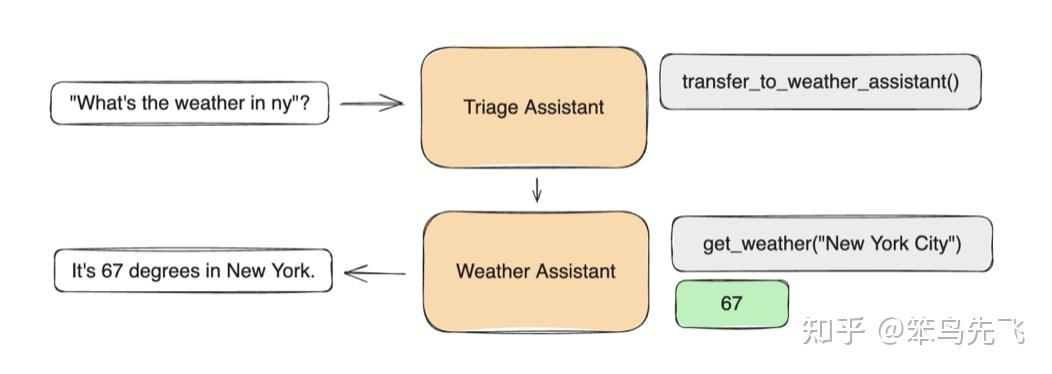
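As a rough illustration of "Code as Actions", the sketch below runs a snippet of model-written Python against a small set of tools. It is plain Python, not smolagents' own executor: the `search` and `summarize` tools, the hard-coded `model_code` string, and `run_action` are hypothetical, and emptying `__builtins__` is only a convenience here, not a real sandbox.

```python
from typing import Callable, Dict

def search(query: str) -> str:
    # Hypothetical tool: a real one would call a search API.
    return f"results for {query}"

def summarize(text: str) -> str:
    # Hypothetical tool: a real one would call a model.
    return text.upper()

TOOLS: Dict[str, Callable[..., str]] = {"search": search, "summarize": summarize}

# Pretend this string came back from the model. Note that it loops and
# composes tool calls in one action, which a single JSON tool call cannot do.
model_code = """
hits = [search(q) for q in ["agents", "workflows"]]
answer = summarize(" | ".join(hits))
"""

def run_action(code: str, tools: Dict[str, Callable[..., str]]) -> Dict[str, object]:
    # Expose only the tools. An empty __builtins__ hides open/import and friends,
    # but it is NOT a security boundary; use a real sandbox for untrusted code.
    namespace: Dict[str, object] = {"__builtins__": {}, **tools}
    exec(code, namespace)
    # Return just the variables the generated code created.
    return {k: v for k, v in namespace.items() if k not in tools and k != "__builtins__"}

result = run_action(model_code, TOOLS)
print(result["answer"])
```

The flexibility and the risk come from the same place: whatever the model writes gets executed, so isolation of the execution environment matters more here than with structured tool calls.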

6. OpenAI Swarm

Positioning: Lightweight multi-agent collaboration framework emphasizing clear role division and handoffs. Strengths: Simple structure, quick to build multi-agent collaboration flows. Limitations: Narrow feature scope, complex flows need extension work. Best for: Lightweight multi-agent collaboration and PoC projects.

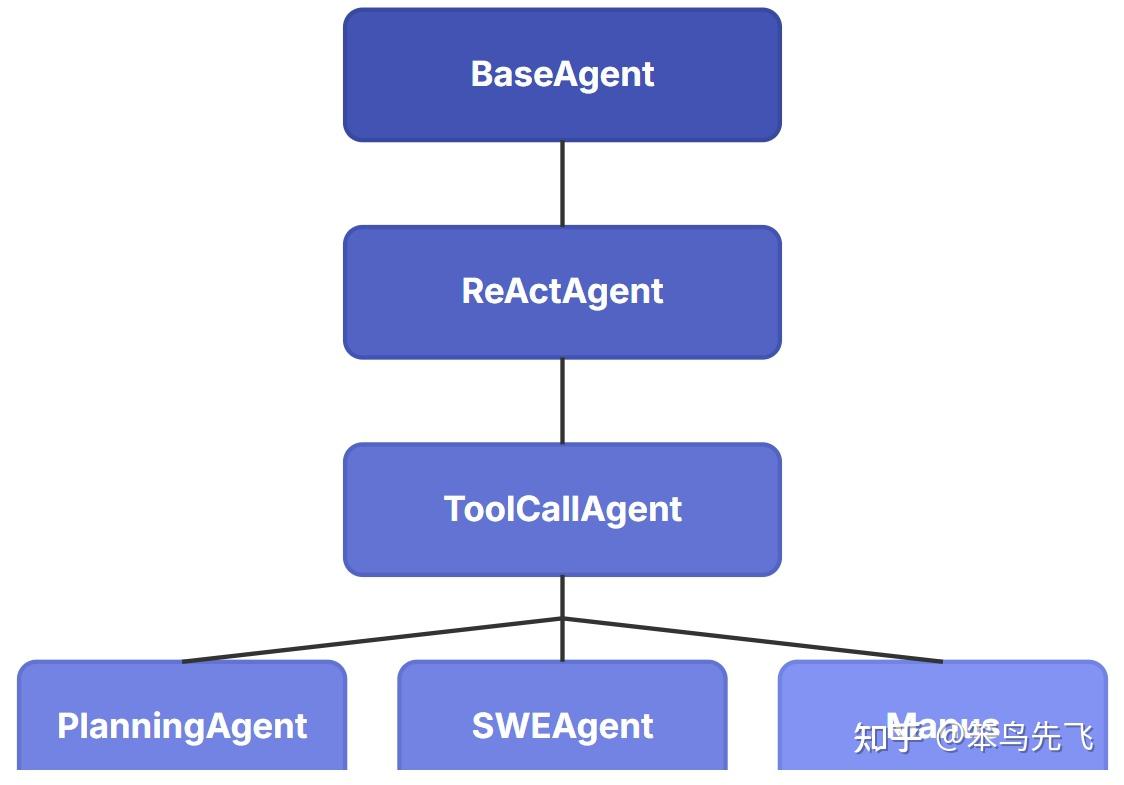
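A handoff can be sketched as an agent returning the next agent alongside its reply, so control of the conversation moves to a different role. The code below is a plain-Python illustration of that pattern rather than the Swarm library itself; the `Agent` dataclass, the `triage` and `billing` agents, and `run` are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple

@dataclass
class Agent:
    name: str
    # A handler returns (reply, agent to hand off to, or None to stay put).
    handle: Callable[[str], Tuple[str, Optional["Agent"]]]

def billing_handle(message: str) -> Tuple[str, Optional[Agent]]:
    return "Billing: I've issued your refund.", None

billing = Agent("billing", billing_handle)

def triage_handle(message: str) -> Tuple[str, Optional[Agent]]:
    # Hypothetical routing rule: refund requests go to billing.
    if "refund" in message.lower():
        return "Triage: passing you to billing.", billing
    return "Triage: how can I help?", None

triage = Agent("triage", triage_handle)

def run(agent: Agent, message: str, max_handoffs: int = 3) -> Tuple[str, List[str]]:
    transcript: List[str] = []
    for _ in range(max_handoffs + 1):  # cap handoffs to avoid ping-pong loops
        reply, next_agent = agent.handle(message)
        transcript.append(reply)
        if next_agent is None:
            break
        agent = next_agent  # the handoff: a different agent now owns the conversation
    return agent.name, transcript

final_agent, transcript = run(triage, "I want a refund")
print(final_agent, transcript)
```

The structure stays simple because there is no shared graph or scheduler, only agents and the handoffs between them. Anything beyond that, such as persistence or retries, is the extension work noted under Limitations.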

7. OpenManus

Positioning: Engineering-focused Agent framework geared toward systematic production deployment. Strengths: Covers multi-role, multi-step, and runtime governance. Limitations: Higher onboarding cost; requires a solid engineering background. Best for: Enterprise-grade Agent engineering deployments.

8. Key Dimensions at a Glance

| Dimension | LangChain | LangGraph | AutoGen | CrewAI | smolagents | OpenAI Swarm | OpenManus |
|---|---|---|---|---|---|---|---|
| Learning Curve | Low-Med | Med-High | High | Med | Low | Low | Med-High |
| Controllability | Med | High | Med | Med | Low | Med | High |
| Autonomy | Med | Med | High | Med | Med | Med | Med |
| Multi-Agent | Med | High | High | High | Low | Med | High |
| Ecosystem Maturity | High | Med | Med | Med | Low | Low | Med |
| Scale Fit | Med | Med-Large | Med-Large | Med | Small | Small-Med | Large |

Quick note: if controllability and observability matter most, go with LangGraph. If ecosystem breadth and fast shipping matter most, go with LangChain.

9. Selection Recommendations

| Goal | Recommended Framework |
|---|---|
| Quick start, mature ecosystem | LangChain |
| Complex flow, controllability first | LangGraph |
| Multi-agent conversation | AutoGen |
| Role division & collaboration | CrewAI |
| Minimal experiments & teaching | smolagents |
| Lightweight multi-agent collab | OpenAI Swarm |
| Production engineering deployment | OpenManus |

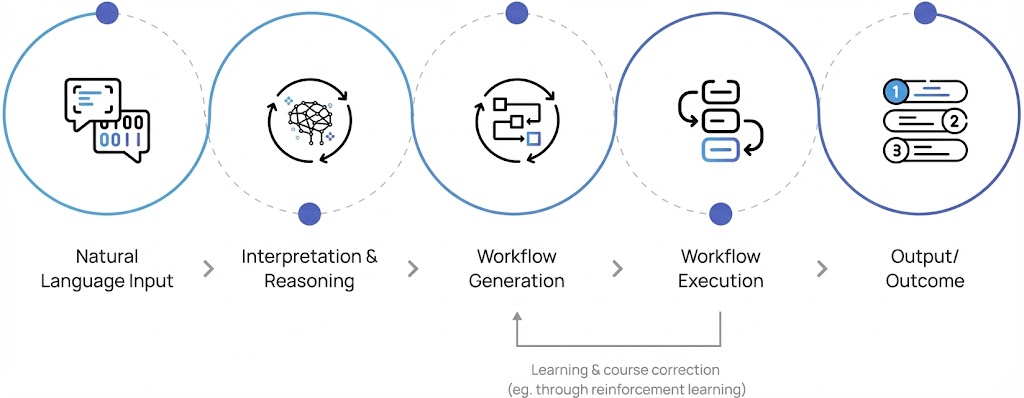

10. When to Use Agents

- Problem paths can't be enumerated; dynamic decision-making is required.

- Tasks span multiple systems and need multi-tool collaboration.

- Conversations require clarification, negotiation, and closed-loop execution.

When these conditions are met, go with an Agent framework. Otherwise, Workflows are more stable and cheaper.

11. Selection Flowchart (Simplified)

- Can you enumerate all paths? Yes -> Workflow. No -> proceed to Agent.

- Do you need strong controllability and audit trails? Yes -> LangGraph / OpenManus.

- Is this multi-role collaboration? Yes -> AutoGen / CrewAI / LangGraph.

- Is this a rapid prototype? Yes -> LangChain / smolagents / Swarm.
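The questions above can be written down as a small decision function. This is only a sketch under two assumptions the flowchart does not state: the questions are checked in the order listed with the first "yes" winning, and an empty list is returned when no question matches. The `recommend` name and its parameters are made up for illustration.

```python
from typing import List

def recommend(
    *,
    paths_enumerable: bool,
    needs_audit: bool = False,
    multi_role: bool = False,
    rapid_prototype: bool = False,
) -> List[str]:
    # Assumption: questions are evaluated top to bottom; the first "yes" decides.
    if paths_enumerable:
        return ["Workflow"]
    if needs_audit:
        return ["LangGraph", "OpenManus"]
    if multi_role:
        return ["AutoGen", "CrewAI", "LangGraph"]
    if rapid_prototype:
        return ["LangChain", "smolagents", "OpenAI Swarm"]
    # Assumption: the flowchart is silent on this case, so return nothing.
    return []

print(recommend(paths_enumerable=False, multi_role=True))
```

In practice several answers can be "yes" at once; intersecting the candidate lists (for example, audit plus multi-role points at LangGraph) is a reasonable refinement.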

12. Common Mistakes

- Jumping straight to multi-agent: Multi-agent is expensive. Validate value with a single agent first.

- Chasing "autonomy" only: Without controllability you'll get production incidents. Add audit and rate limiting.

- Ignoring data and tool quality: Agent quality = model × data × tool quality. The model is just one piece.

13. Deployment Advice (AI Engineer Perspective)

- Workflows first, Agents second: Lock down deterministic processes first, then shrink the uncontrolled surface area.

- Stabilize the tool layer first: APIs must be reliable, permissions minimal, errors retryable.

- Add observability and replay: Log decisions, tool calls, and key inputs/outputs.

- Human-in-the-loop fallback: Add manual confirmation or rollback strategies at critical steps.
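Three of these points (retryable errors, logged tool calls, and manual confirmation) can be combined in one thin wrapper around every tool call. The sketch below is a minimal illustration, not a production design: `call_tool`, `audit_log`, the `approve` callback, and the choice to treat only `ConnectionError` as retryable are all assumptions.

```python
import time
from typing import Any, Callable, Dict, List

audit_log: List[Dict[str, Any]] = []  # in-memory stand-in for a real log sink

def call_tool(
    name: str,
    tool: Callable[..., Any],
    args: Dict[str, Any],
    *,
    retries: int = 2,
    backoff_s: float = 0.0,
    needs_approval: bool = False,
    approve: Callable[[str, Dict[str, Any]], bool] = lambda name, args: False,
) -> Any:
    # Human-in-the-loop gate: critical tools are denied unless someone approves.
    if needs_approval and not approve(name, args):
        audit_log.append({"tool": name, "args": args, "status": "rejected"})
        raise PermissionError(f"{name} was not approved")
    for attempt in range(1, retries + 2):
        try:
            result = tool(**args)
        except ConnectionError as exc:  # assumption: only transient errors are retried
            audit_log.append({"tool": name, "args": args, "attempt": attempt,
                              "status": "error", "error": str(exc)})
            if attempt == retries + 1:
                raise  # retries exhausted, surface the failure
            time.sleep(backoff_s * attempt)  # linear backoff between attempts
        else:
            audit_log.append({"tool": name, "args": args, "attempt": attempt,
                              "status": "ok", "result": result})
            return result

# Usage: a hypothetical flaky tool that times out once, then succeeds.
calls = {"n": 0}

def flaky_lookup(order_id: str) -> str:
    calls["n"] += 1
    if calls["n"] == 1:
        raise ConnectionError("timeout")
    return f"order {order_id}: shipped"

status = call_tool("lookup", flaky_lookup, {"order_id": "A1"})
print(status, [entry["status"] for entry in audit_log])
```

Because every call, failure, and rejection lands in `audit_log` with its inputs, a run can be inspected or replayed afterwards without touching the agent logic itself.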

FAQ

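LangChain and LangGraph come from the same company. What is the actual difference?

LangChain is a general-purpose LLM application framework with modular wrappers for Prompts, Memory, Tools, and Agents. It has the most mature ecosystem, is the fastest to pick up, and suits single-agent scenarios. LangGraph is a graph-based orchestration framework that emphasizes state management and controllable flow, with support for branching, looping, human-in-the-loop, observability, and recovery. Learning curve: LangChain Low-Med, LangGraph Med-High. Rule of thumb for production: simple flow and a need to ship fast -> LangChain; complex flow, explicit control over execution paths, and multi-agent collaboration -> LangGraph. The two can be mixed, and LangGraph is compatible with LangChain's tool ecosystem.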

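What does smolagents' "Code as Actions" mean?

smolagents has the model write code that calls tools directly, instead of emitting structured tool-call JSON. The model generates a piece of Python (for example, call search('xxx') first, then feed result into summarize()), and the framework runs it. The upside is that it is lightweight, fast to pick up, and flexible: code naturally expresses loops, conditionals, and composed calls, which makes it more expressive than a JSON schema. The downside is a relatively small ecosystem, DIY infrastructure for complex flows, and higher security risk, because arbitrary code gets executed. It suits quick experiments, teaching, and lightweight projects; it is not a fit for enterprise production.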

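OpenAI Swarm and CrewAI both do multi-agent. How do I choose?

Swarm is minimal and lightweight. It emphasizes clear role division and handoffs (one Agent passes the conversation directly to another), which suits PoCs and quickly wiring up a handful of collaborating Agents. CrewAI follows a team-collaboration model, with YAML-friendly configuration, rich tool integrations, and finer-grained role division, which suits medium-scale scenarios where the collaboration process is clearly defined. Both sit in the low-to-medium range for learning curve (Swarm Low, CrewAI Med in the table above). Swarm's feature scope is narrow and complex scenarios need extension work; CrewAI still needs tools and flow logic added by hand in complex scenarios. For large production deployments, consider LangGraph or OpenManus.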

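What is OpenManus suited for, and why is the onboarding cost high?

OpenManus targets engineering-grade Agent deployment, covering multi-role, multi-step, and runtime governance (such as audit, rate limiting, and rollback). Its learning curve is Med-High, controllability is High, and it fits large-scale use, which makes it the choice for enterprise-grade Agent deployment. It is hard to get started with because it assumes an engineering background: you need to understand runtime governance, state machines, and the design of multi-role collaboration. If you are only building a PoC or a single-Agent task, OpenManus is overkill. Adopt it only once you have hit the limits of LangChain or Swarm and need more systematic engineering capabilities.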

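What are the three most common mistakes when choosing an Agent framework?

The three big mistakes: (1) Jumping straight to multi-agent. Multi-agent is expensive and hard to debug, so validate value with a single Agent first and split later. (2) Chasing "autonomy" only. Without controllability you will get production incidents, so audit and rate limiting are mandatory, and even the "smartest" Agent needs a kill switch. (3) Ignoring data and tool quality. Agent quality = model × data × tool quality, and the model is just one piece; if the tool APIs are unreliable, the documentation is poor, or the permissions are wrong, no model is strong enough to compensate. Deployment advice: Workflows first and Agents second, stabilize the tool layer first, add observability and replay, and put a human-in-the-loop fallback on critical steps.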