Context Engineering

Why Context Engineering determines AI Agent stability and controllability

TL;DR

- Context Engineering is the key to making AI Agents execute reliably: it covers system prompts, tool instructions, memory, constraints, and observability, not just "writing a better prompt."

- Most failures aren't because the model isn't smart enough. They're because the context design is unclear: silent skips, inconsistent state, wrong tool usage, missing error handling.

- The most effective iteration cycle: deploy -> observe -> refine -> test, and crystallize behavioral data into reusable rules and rubrics.

Core Concepts

Context Engineering means systematically designing and iterating on the input context around an AI Agent to make it more reliable and controllable in complex tasks. Compared to single-call LLM "prompt engineering," it puts more emphasis on:

- System prompt: Define role, boundaries, allowed/forbidden actions, output format

- Task constraints: Completion criteria, quality standards, skip rules, retry rules

- Tool descriptions: When to use which tool, how to fill parameters, how to handle errors

- Memory management: How to persist state, what needs long-term storage, how to compress context

- Error handling: Retry/fallback after failure, and when to escalate to human-in-the-loop

How to Apply

Think of Context Engineering as an engineering feedback loop:

- Write the goal as a verifiable definition (what good looks like)

- Write the execution process as an observable state machine (plan/status/log)

- Use tools to make key steps verifiable (especially for facts and external writes)

- Bake failure modes into the system prompt (e.g., no silent skips, must log justification)

- Keep replaying and reviewing with real tasks (turn "random bugs" into explicit rules)

Case Study: Deep Research Agent

These two issues are extremely common -- pretty much every Deep Research Agent runs into them:

Issue 1: Incomplete Task Execution

Symptom: The orchestrator plans 3 search tasks but only executes 2. The third gets silently skipped.

Root cause: The system prompt didn't spell out "task completion rules," so the agent assumed the task was redundant or unnecessary.

Fix (pick one):

- Flexible: Allow skipping, but require justification and status update

- Strict: All planned tasks must be executed

(Example rules -- English terms kept for direct reuse)

You are a deep research agent responsible for executing comprehensive research tasks.

TASK EXECUTION RULES:

- For each search task you create, you MUST either:

1. Execute a web search and document findings, OR

2. Explicitly state why the search is unnecessary and mark it as completed with justification

- Do NOT skip tasks silently or make assumptions about task redundancy

- If you determine tasks overlap, consolidate them BEFORE execution

- Update task status in the spreadsheet after each action
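The "no silent skips" rule above can also be checked mechanically after a run. The following is a minimal sketch, not the guide's implementation: `PlannedTask`, its fields, and `find_silent_skips` are hypothetical names, and it assumes the agent records whether a search actually ran and any justification it gave.

```python
from dataclasses import dataclass


@dataclass
class PlannedTask:
    # Hypothetical record of one planned search task.
    task_id: str
    query: str
    status: str = "todo"      # todo / in_progress / completed
    executed: bool = False    # True once a web search actually ran
    justification: str = ""   # required when completed without a search


def find_silent_skips(tasks):
    """Return IDs of tasks that were neither searched nor explicitly justified."""
    violations = []
    for task in tasks:
        searched = task.executed and task.status == "completed"
        justified_skip = (
            task.status == "completed"
            and not task.executed
            and task.justification.strip() != ""
        )
        if not (searched or justified_skip):
            violations.append(task.task_id)
    return violations


plan = [
    PlannedTask("T1", "market size 2024", status="completed", executed=True),
    PlannedTask("T2", "top competitors", status="completed",
                justification="Covered by T1 results"),
    PlannedTask("T3", "regulatory changes"),  # planned but never touched
]
print(find_silent_skips(plan))  # ['T3']
```

A check like this turns the prompt rule into something a test suite can assert on, so a regression in the prompt shows up as a failing run rather than a missing section in the report.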

Issue 2: Lack of Debugging Visibility

Symptom: Without logs and state tracking, you can't tell why the agent made certain decisions, which makes iteration nearly impossible.

Fix: Use a simple task tracker (spreadsheet / file) to force observability:

- Task ID

- Search query

- Status (todo/in_progress/completed)

- Results summary

- Timestamp
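As one possible shape for such a tracker, the sketch below writes the five fields as CSV rows and rejects any status outside the three listed values. The column names and the `log_task` helper are assumptions made for illustration; an in-memory buffer stands in for a real file.

```python
import csv
import io
from datetime import datetime, timezone

# Column names are illustrative; they mirror the five tracker fields.
FIELDS = ["task_id", "search_query", "status", "results_summary", "timestamp"]
ALLOWED_STATUSES = {"todo", "in_progress", "completed"}


def log_task(writer, task_id, search_query, status, results_summary=""):
    """Append one tracker row, rejecting status values outside the allowed set."""
    if status not in ALLOWED_STATUSES:
        raise ValueError(f"unknown status: {status!r}")
    writer.writerow({
        "task_id": task_id,
        "search_query": search_query,
        "status": status,
        "results_summary": results_summary,
        "timestamp": datetime.now(timezone.utc).isoformat(),
    })


buffer = io.StringIO()  # stand-in for a real file opened with newline=""
writer = csv.DictWriter(buffer, fieldnames=FIELDS)
writer.writeheader()
log_task(writer, "T1", "EV battery prices 2024", "in_progress")
log_task(writer, "T1", "EV battery prices 2024", "completed", "Found 3 relevant sources")
print(buffer.getvalue())
```

Appending a new row per state change, instead of overwriting the old one, keeps the full history available for replay.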

Self-check Rubric

- Have you eliminated prompt ambiguity (are instructions actionable and verifiable)?

- Have you clearly specified required vs. optional actions?

- Do you have observability (logs, state, tool call replay)?

- Have you covered error cases (retry on failure, partial failure, human escalation)?

- Does the agent behave consistently given the same input (behavioral consistency)?

Practice

Exercise: Write a Context Engineering checklist for a "weekly market research agent."

- Must include: plan format, status value constraints, tool usage rules, failure retry rules, output schema.

- Bonus: Define a simple evaluation rubric (e.g., 10-point scale: coverage / correctness / citations / actionability).

References

Original (English)

Context engineering is a critical practice for building reliable and effective AI agents. This guide explores the importance of context engineering through a practical example of building a deep research agent.

Context engineering involves carefully crafting and refining the prompts, instructions, and constraints that guide an AI agent's behavior to achieve desired outcomes.

What is Context Engineering?

Context engineering is the process of designing, testing, and iterating on the contextual information provided to AI agents to shape their behavior and improve task performance. Unlike simple prompt engineering for single LLM calls, context engineering for agents involves (but is not limited to):

- System prompts that define agent behavior and capabilities

- Task constraints that guide decision-making

- Tool descriptions that clarify when and how to use available functions/tools

- Memory management for tracking state across multiple steps

- Error handling patterns for robust execution

Building a Deep Research Agent: A Case Study

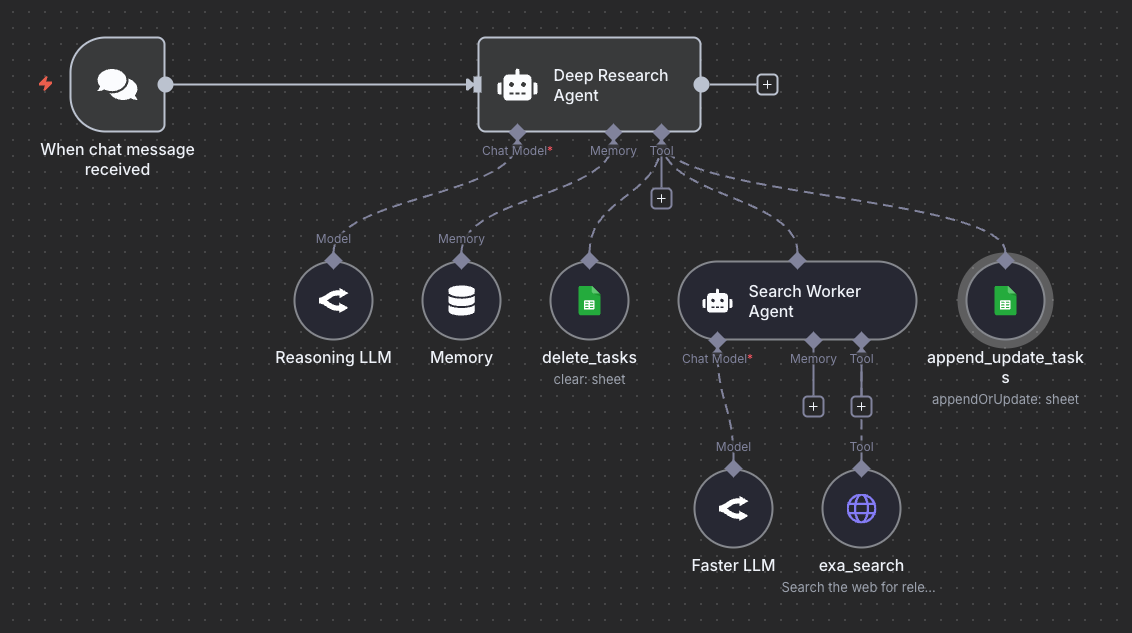

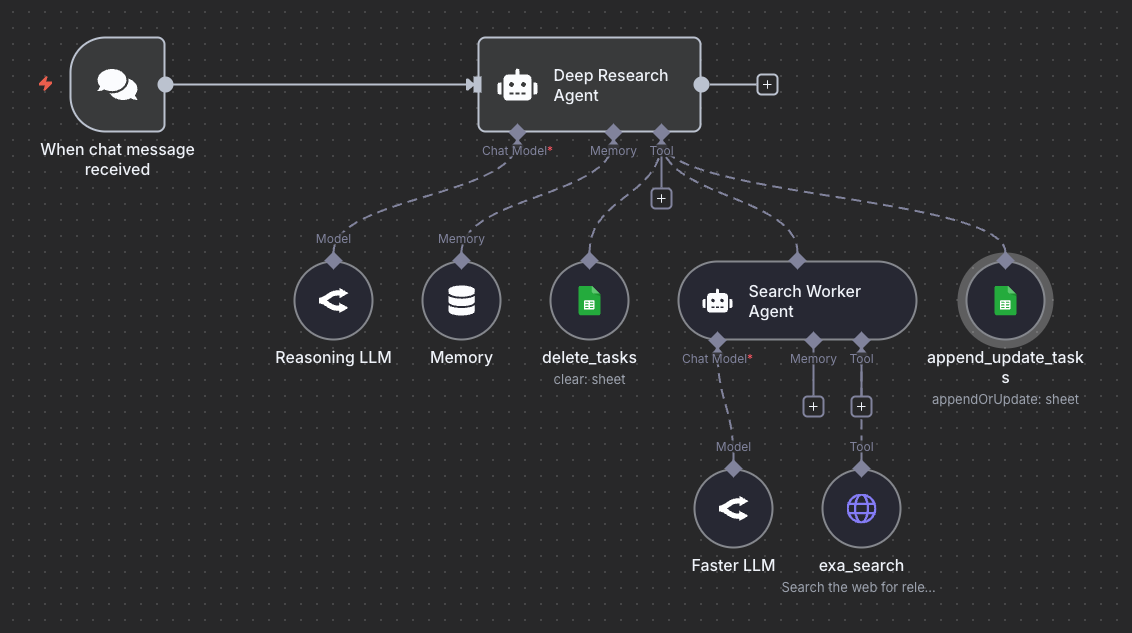

Let's explore context engineering principles through an example: a minimal deep research agent that performs web searches and generates reports.

The Context Engineering Challenge

The first version of this agent system revealed several behavioral issues that required careful context engineering:

Issue 1: Incomplete Task Execution

Problem: When running the agentic workflow, the orchestrator agent often creates three search tasks but only executes searches for two of them, skipping the third task without explicit justification.

Root Cause: The agent's system prompt lacked explicit constraints about task completion requirements. The agent made assumptions about which searches were necessary, leading to inconsistent behavior.

Solution: Two approaches are possible:

- Flexible Approach (current): Allow the agent to decide which searches are necessary, but require explicit reasoning for skipped tasks

- Strict Approach: Add explicit constraints requiring search execution for all planned tasks

Example system prompt enhancement:

You are a deep research agent responsible for executing comprehensive research tasks.

TASK EXECUTION RULES:

- For each search task you create, you MUST either:

1. Execute a web search and document findings, OR

2. Explicitly state why the search is unnecessary and mark it as completed with justification

- Do NOT skip tasks silently or make assumptions about task redundancy

- If you determine tasks overlap, consolidate them BEFORE execution

- Update task status in the spreadsheet after each action

Issue 2: Lack of Debugging Visibility

Problem: Without proper logging and state tracking, it was difficult to understand why the agent made certain decisions.

Solution: For this example, it helps to implement a task management system using a spreadsheet or text file (for simplicity) with the following fields:

- Task ID

- Search query

- Status (todo, in_progress, completed)

- Results summary

- Timestamp

This visibility enables:

- Real-time debugging of agent decisions

- Understanding of task execution flow

- Identification of behavioral patterns

- Data for iterative improvements

Context Engineering Best Practices

Based on this case study, here are key principles for effective context engineering:

1. Eliminate Prompt Ambiguity

Bad Example:

Perform research on the given topic.

Good Example:

Perform research on the given topic by:

1. Breaking down the query into 3-5 specific search subtasks

2. Executing a web search for EACH subtask using the search_tool

3. Documenting findings for each search in the task tracker

4. Synthesizing all findings into a comprehensive report

2. Make Expectations Explicit

Don't assume the agent knows what you want. Be explicit about:

- Required vs. optional actions

- Quality standards

- Output formats

- Decision-making criteria

3. Implement Observability

Build debugging mechanisms into your agentic system:

- Log all agent decisions and reasoning

- Track state changes in external storage

- Record tool calls and their outcomes

- Capture errors and edge cases

Warning: Pay close attention to every run of your agentic system. Strange behaviors and edge cases are opportunities to improve your context engineering efforts.

4. Iterate Based on Behavior

Context engineering is an iterative process:

- Deploy the agent with initial context

- Observe actual behavior in production

- Identify deviations from expected behavior

- Refine system prompts and constraints

- Test and validate improvements

- Repeat

5. Balance Flexibility and Constraints

Consider the tradeoff between:

- Strict constraints: More predictable but less adaptable

- Flexible guidelines: More adaptable but potentially inconsistent

Choose based on your use case requirements.

Advanced Context Engineering Techniques

Layered Context Architecture

Context engineering applies to all stages of the AI agent build process. Depending on the agent, it can help to think of context as a hierarchy. For our basic agentic system, we can organize it into four layers:

- System Layer: Core agent identity and capabilities

- Task Layer: Specific instructions for the current task

- Tool Layer: Descriptions and usage guidelines for each tool

- Memory Layer: Relevant historical context and learnings
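To make the four layers concrete, here is a small sketch that assembles them into a single prompt string. The `build_context` function, the section headers, and the sample layer contents are all assumptions for illustration; the guide does not prescribe a particular format.

```python
def build_context(system, task, tools, memory):
    """Join the four layers into one prompt, in the order listed above."""
    tool_lines = [f"- {name}: {usage}" for name, usage in tools.items()]
    memory_lines = [f"- {note}" for note in memory]
    sections = [
        ("SYSTEM", system),
        ("TASK", task),
        ("TOOLS", "\n".join(tool_lines)),
        ("MEMORY", "\n".join(memory_lines)),
    ]
    # Skip empty layers so the prompt carries no blank headers.
    return "\n\n".join(f"## {title}\n{body}" for title, body in sections if body)


prompt = build_context(
    system="You are a deep research agent responsible for executing comprehensive research tasks.",
    task="Research the given topic and produce a structured report.",
    tools={"search_tool": "Run one web search per planned subtask."},
    memory=["Previous run skipped a task without justification."],
)
print(prompt)
```

Keeping each layer as a separate argument means one layer can be revised and tested without touching the others.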

Dynamic Context Adjustment

Another approach is to adjust context dynamically while the agent runs, instead of fixing it once up front. In our example, the adjustment can depend on:

- Task complexity

- Available resources

- Previous execution history

- Error patterns
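One way to read the four signals above is as inputs to a function that adds rules to a base prompt. The sketch below is an assumption-heavy illustration: `adjust_context`, its parameters, and the thresholds (more than 5 subtasks, 2 or more recent failures) are invented for the example, while the rule texts reuse wording from the case study.

```python
def adjust_context(base_rules, subtask_count, searches_left,
                   skipped_last_run, recent_failures):
    """Return the base rules plus any extra rules the current signals call for."""
    rules = list(base_rules)  # copy so the caller's list is not mutated
    if subtask_count > 5:  # task complexity (threshold is an assumption)
        rules.append("If you determine tasks overlap, consolidate them BEFORE execution.")
    if searches_left < subtask_count:  # available resources
        rules.append(
            f"Only {searches_left} searches remain: rank tasks by importance "
            "and justify any you skip."
        )
    if skipped_last_run:  # previous execution history
        rules.append("Do NOT skip tasks silently or make assumptions about task redundancy.")
    if recent_failures >= 2:  # error patterns (threshold is an assumption)
        rules.append("If a search fails, retry once with a rephrased query.")
    return rules


base = ["Update task status in the spreadsheet after each action."]
for rule in adjust_context(base, subtask_count=6, searches_left=4,
                           skipped_last_run=True, recent_failures=2):
    print(rule)
```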

Context Validation

Evaluation is key to ensuring context engineering techniques are working as they should for your AI agents. Before deployment, validate your context design:

- Completeness: Does it cover all important scenarios?

- Clarity: Is it unambiguous?

- Consistency: Do different parts align?

- Testability: Can you verify the behavior?

Common Context Engineering Pitfalls

Below are a few common context engineering pitfalls to avoid when building AI agents:

1. Over-Constraint

Problem: Too many rules make the agent inflexible and unable to handle edge cases.

Example:

NEVER skip a search task.

ALWAYS perform exactly 3 searches.

NEVER combine similar queries.

Better Approach:

Aim to perform searches for all planned tasks. If you determine that tasks are redundant, consolidate them before execution and document your reasoning.

2. Under-Specification

Problem: Vague instructions lead to unpredictable behavior.

Example:

Do some research and create a report.

Better Approach:

Execute research by:

1. Analyzing the user query to identify key information needs

2. Creating 3-5 specific search tasks covering different aspects

3. Executing searches using the search_tool for each task

4. Synthesizing findings into a structured report with sections for:

- Executive summary

- Key findings per search task

- Conclusions and insights

3. Ignoring Error Cases

Problem: Context doesn't specify behavior when things go wrong.

Solution: In some cases, it helps to add error handling instructions to your AI Agents:

ERROR HANDLING:

- If a search fails, retry once with a rephrased query

- If retry fails, document the failure and continue with remaining tasks

- If more than 50% of searches fail, alert the user and request guidance

- Never stop execution completely without user notification
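The same policy can be mirrored in the orchestration code, so the behavior does not rest on the prompt alone. This is a minimal sketch under stated assumptions: `run_searches`, `fake_search`, and `fake_rephrase` are hypothetical stand-ins for a real search tool and a real query rewriter.

```python
def run_searches(queries, search, rephrase):
    """Apply the retry / continue / escalate rules to a batch of searches."""
    results = {}
    failures = []
    for query in queries:
        try:
            results[query] = search(query)
            continue
        except Exception:
            pass  # fall through to the single rephrased retry
        try:
            results[query] = search(rephrase(query))
        except Exception as exc:
            # Document the failure and keep going with the remaining tasks.
            failures.append((query, str(exc)))
    # Escalate to the user when more than 50% of searches failed.
    needs_user = bool(queries) and len(failures) / len(queries) > 0.5
    return results, failures, needs_user


def fake_search(query):
    # Hypothetical search backend that fails for any query containing "broken".
    if "broken" in query:
        raise RuntimeError("search backend unavailable")
    return f"results for {query}"


def fake_rephrase(query):
    # Hypothetical rephrasing step.
    return query + " overview"


results, failures, needs_user = run_searches(
    ["solar costs", "broken feed", "broken index"], fake_search, fake_rephrase
)
print(results)      # {'solar costs': 'results for solar costs'}
print(needs_user)   # True
```

Returning a `needs_user` flag instead of raising keeps the run alive, which matches the rule that execution should never stop without notifying the user.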

Measuring Context Engineering Success

Track these metrics to evaluate context engineering effectiveness:

- Task Completion Rate: Percentage of tasks completed successfully

- Behavioral Consistency: Similarity of agent behavior across similar inputs

- Error Rate: Frequency of failures and unexpected behaviors

- User Satisfaction: Quality and usefulness of outputs

- Debugging Time: Time required to identify and fix issues
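Two of these metrics, task completion rate and error rate, can be computed directly from run logs. The sketch below assumes a hypothetical per-run record with `planned`, `completed`, and `errors` counts, and it treats error rate as the share of runs with at least one error; other definitions are equally reasonable.

```python
def summarize_runs(runs):
    """Compute task completion rate and error rate over a list of run records."""
    total_tasks = sum(run["planned"] for run in runs)
    completed = sum(run["completed"] for run in runs)
    runs_with_errors = sum(1 for run in runs if run["errors"] > 0)
    return {
        "task_completion_rate": completed / total_tasks if total_tasks else 0.0,
        "error_rate": runs_with_errors / len(runs) if runs else 0.0,
    }


# Illustrative records, not real measurements.
runs = [
    {"planned": 3, "completed": 3, "errors": 0},
    {"planned": 3, "completed": 2, "errors": 1},
    {"planned": 2, "completed": 2, "errors": 0},
    {"planned": 2, "completed": 2, "errors": 0},
]
print(summarize_runs(runs))  # {'task_completion_rate': 0.9, 'error_rate': 0.25}
```

Behavioral consistency, user satisfaction, and debugging time need other data sources (repeated runs, user feedback, issue timestamps) and are left out of this sketch.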

Treat context engineering not as a one-time activity but as an ongoing practice that requires:

- Systematic observation of agent behavior

- Careful analysis of failures and edge cases

- Iterative refinement of instructions and constraints

- Rigorous testing of changes

We will be covering these principles in more detail in upcoming guides. By applying these principles, you can build AI agent systems that are reliable, predictable, and effective at solving complex tasks.

Frequently Asked Questions

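Aren't Context Engineering and Prompt Engineering the same thing?

No. As this chapter defines them, prompt engineering is about "writing a better passage" for a single LLM call, while context engineering is systematic design around an agent: system prompt, task constraints, tool descriptions, memory management, and error handling are all in scope. In other words, prompt engineering is one piece of it; context engineering also has to cover how the agent tracks state, how it calls tools, and how it handles failure.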

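What is the root cause of the silent skip problem in the Deep Research Agent case?

The root cause given in this chapter: the system prompt did not spell out "task completion rules," so the agent assumed on its own that some search tasks were redundant or unnecessary and skipped them without writing a justification. There are two fixes: Flexible (skipping is allowed, but a reason must be recorded and the status updated) or Strict (every planned task must be executed). The system prompt enhancement example in this chapter writes this rule in explicitly.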

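When agent behavior is out of control, which piece should you fix first?

Observability. Issue 2 in the case study says it directly: "Without logs and state tracking, you can't tell why the agent made certain decisions, which makes iteration nearly impossible." The minimal approach is a task tracker (a spreadsheet or text file is enough) in which every task carries a Task ID, search query, status (todo/in_progress/completed), results summary, and timestamp. You can only fix what you can replay.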

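Over-constraint or under-specification: which mistake is more common?

This chapter lists both, but under-specification is more common in new teams: a prompt like "Do some research and create a report" goes straight to production, and the behavior is completely unpredictable. Over-constraint usually shows up after a team has been burned a few times: "NEVER skip a search," "ALWAYS perform exactly 3 searches," and the agent ends up rigid in edge cases. The compromise this chapter suggests: write "goal + allowed deviations + reasons that must be recorded."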

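How do you measure whether Context Engineering is being done well?

This chapter gives 5 metrics: Task Completion Rate, Behavioral Consistency (consistency of behavior across similar inputs), Error Rate (frequency of failures and anomalies), User Satisfaction (quality and usefulness of outputs), and Debugging Time (how long it takes to locate and fix an issue). Combined with the deploy -> observe -> refine -> test loop, checking all five after every prompt change is far more reliable than "I feel like it seems stable now."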