Llama 3

Llama 3 overview

TL;DR

- Llama 3 is a family of LLMs from Meta (8B and 70B, both pre-trained and instruction-tuned variants), commonly used as an open-weight general-purpose model base.

- Don't just look at benchmarks for model selection: also check context length, latency/cost, tool support, and your own evaluation results (ideally with a small regression set).

- If you're building RAG / agentic workflows: lock down the "answer + evidence" output format, and emphasize "no fabrication" in prompts.
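One way to lock down an "answer + evidence" format is to require JSON and reject any reply that cites a passage you never retrieved. The sketch below is a minimal illustration under assumptions: the field names `answer` and `evidence`, the `GroundedAnswer` type, and the passage IDs are all hypothetical, not part of any Llama 3 API.

```python
import json
from dataclasses import dataclass


@dataclass
class GroundedAnswer:
    answer: str
    evidence: list[str]  # IDs of the retrieved passages the answer relies on


def parse_grounded_answer(raw: str, known_ids: set[str]) -> GroundedAnswer:
    """Parse a model reply and reject anything that breaks the agreed format."""
    data = json.loads(raw)  # raises json.JSONDecodeError (a ValueError) on non-JSON output
    if not isinstance(data, dict):
        raise ValueError("reply must be a JSON object")

    answer = data.get("answer")
    evidence = data.get("evidence")
    if not isinstance(answer, str) or not answer.strip():
        raise ValueError("'answer' must be a non-empty string")
    if not isinstance(evidence, list) or not evidence:
        raise ValueError("'evidence' must be a non-empty list")

    # Every cited ID must be a string that matches a passage we actually retrieved.
    unknown = [e for e in evidence if not isinstance(e, str) or e not in known_ids]
    if unknown:
        raise ValueError(f"evidence cites passages that were not retrieved: {unknown}")

    return GroundedAnswer(answer=answer.strip(), evidence=evidence)


if __name__ == "__main__":
    reply = '{"answer": "Trained on 8K-token sequences.", "evidence": ["doc-1"]}'
    print(parse_grounded_answer(reply, known_ids={"doc-1", "doc-2"}))
```

A reply that fails validation can be retried or routed to a fallback, which also gives you a concrete "format drift" counter to track over time.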

Reading Guide

This page summarizes Llama 3's architecture highlights and performance comparisons, with links to further reading. Use it to:

- Understand Llama 3's core technical parameters (tokenizer, context length, post-training pipeline)

- Build a "candidate model" mental map (comparing with Gemma, Mistral, Gemini, Claude, etc.)

For production use, you should also:

- Write 10-50 evaluation samples for your business scenario (with acceptance criteria)

- Compare different models using the same prompts, tracking performance and cost

- Document failure modes (hallucination, format drift, tool misuse) and iterate on prompts and guardrails
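The checklist above can be sketched as a tiny regression harness. This is an illustration under assumptions: `EvalCase`, `run_regression`, and `compare` are hypothetical names, each model is represented as a plain `prompt -> text` callable that you would wire to your own client, and substring matching stands in for whatever acceptance criteria fit your scenario.

```python
import time
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalCase:
    prompt: str
    must_contain: list[str]  # acceptance criterion: every substring must appear


def run_regression(model: Callable[[str], str], cases: list[EvalCase]) -> dict:
    """Run every case through `model`; collect pass rate, failures, and mean latency."""
    failures = []
    total_seconds = 0.0
    for case in cases:
        start = time.perf_counter()
        output = model(case.prompt)
        total_seconds += time.perf_counter() - start
        missing = [s for s in case.must_contain if s.lower() not in output.lower()]
        if missing:
            # Keep the raw output so failure modes can be documented later.
            failures.append({"prompt": case.prompt, "missing": missing, "output": output})
    n = len(cases)
    return {
        "pass_rate": (n - len(failures)) / n if n else 0.0,
        "mean_latency_s": total_seconds / n if n else 0.0,
        "failures": failures,
    }


def compare(models: dict[str, Callable[[str], str]], cases: list[EvalCase]) -> dict[str, float]:
    """Score several models on the same prompts and return pass rate per model."""
    return {name: run_regression(fn, cases)["pass_rate"] for name, fn in models.items()}


if __name__ == "__main__":
    cases = [EvalCase("Who released Llama 3?", ["Meta"])]
    stub = lambda prompt: "Llama 3 was released by Meta."  # stand-in for a real model call
    print(compare({"stub-model": stub}, cases))
```

Per-request cost is not measured here; if your provider reports token counts, they could be accumulated alongside latency in the same loop.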

Original (English)

Meta recently introduced a new family of large language models (LLMs) called Llama 3. The release includes pre-trained and instruction-tuned models at 8B and 70B parameters.

Llama 3 Architecture Details

Here is a summary of the technical details Meta has shared about Llama 3:

- It uses a standard decoder-only transformer.

- The vocabulary is 128K tokens.

- It is trained on sequences of 8K tokens.

- It applies grouped query attention (GQA).

- It is pretrained on over 15T tokens.

- Its post-training combines supervised fine-tuning (SFT), rejection sampling, proximal policy optimization (PPO), and direct preference optimization (DPO).
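To make the grouped query attention bullet concrete, the sketch below shows how query heads can share key/value heads and what that does to the key/value cache at an 8K sequence length. The layer count, head counts, head dimension, and 2-byte values are illustrative assumptions, not figures stated in this summary, and mapping consecutive query heads to one shared head is just one common convention.

```python
def kv_head_for_query_head(q_head: int, n_q_heads: int, n_kv_heads: int) -> int:
    """Map a query head to the key/value head it shares (consecutive heads form a group)."""
    if n_q_heads % n_kv_heads != 0:
        raise ValueError("n_q_heads must be a multiple of n_kv_heads")
    group_size = n_q_heads // n_kv_heads
    return q_head // group_size


def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   seq_len: int, bytes_per_value: int = 2) -> int:
    """Cache size for one sequence; the leading 2 covers keys plus values."""
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_value


# Illustrative configuration (assumed for this example, not taken from the text).
N_LAYERS, N_Q_HEADS, N_KV_HEADS, HEAD_DIM, SEQ_LEN = 32, 32, 8, 128, 8192

gqa = kv_cache_bytes(N_LAYERS, N_KV_HEADS, HEAD_DIM, SEQ_LEN)
mha = kv_cache_bytes(N_LAYERS, N_Q_HEADS, HEAD_DIM, SEQ_LEN)  # one KV head per query head

if __name__ == "__main__":
    print([kv_head_for_query_head(h, N_Q_HEADS, N_KV_HEADS) for h in range(8)])
    # [0, 0, 0, 0, 1, 1, 1, 1]
    print(f"GQA: {gqa / 2**30:.1f} GiB, MHA: {mha / 2**30:.1f} GiB")
    # GQA: 1.0 GiB, MHA: 4.0 GiB
```

Under these assumed numbers, sharing each key/value head across four query heads cuts the per-sequence cache by a factor of four, which is the main practical motivation for GQA at inference time.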

Performance

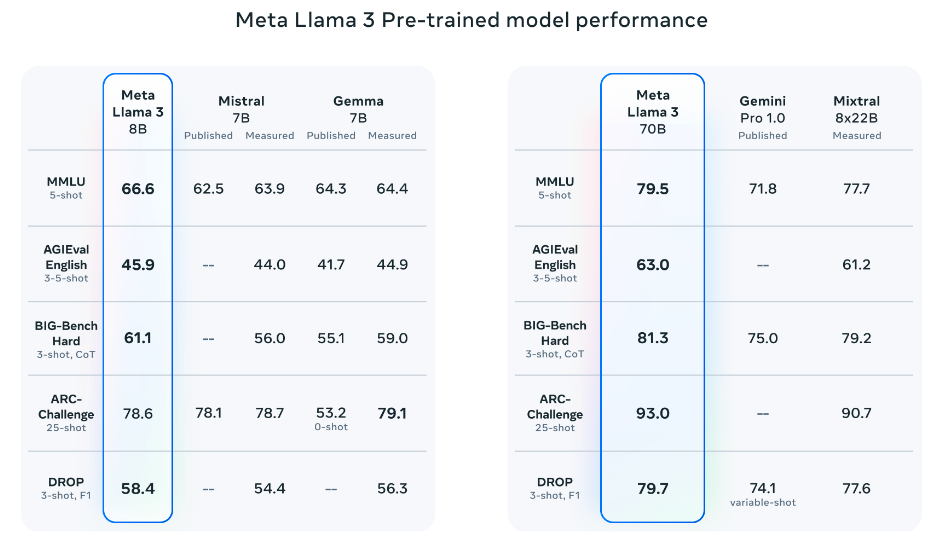

Notably, Llama 3 8B (instruction-tuned) outperforms Gemma 7B and Mistral 7B Instruct. Llama 3 70B broadly outperforms Gemini Pro 1.5 and Claude 3 Sonnet, though it falls slightly behind Gemini Pro 1.5 on the MATH benchmark.

Source: Meta AI

The pretrained models also outperform other models on several benchmarks, including AGIEval (English), MMLU, and Big-Bench Hard.

Source: Meta AI

Llama 3 400B

Meta also reported that a 400B parameter model is still in training and will be released later. Multimodal support, multilingual capabilities, and longer context windows are also in the pipeline. Meta reported results for the current Llama 3 400B checkpoint (as of April 15, 2024) on common benchmarks such as MMLU and Big-Bench Hard.

Source: Meta AI

The licensing information for the Llama 3 models can be found on the model card.

Extended Review of Llama 3

Here is a longer review of Llama 3:

https://www.youtube.com/embed/h2aEmciRd6U?si=m7-xXu5IWpB-6mE0