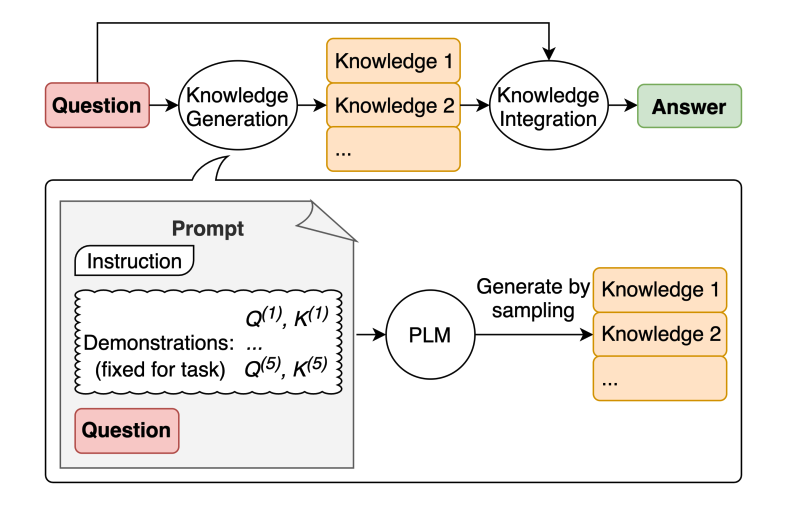

Generated Knowledge Prompting

Generate knowledge first, then answer (boosts commonsense / knowledge-intensive tasks)

Image source: Liu et al. 2022

LLMs continue to improve, and one popular technique is to add relevant knowledge or information to the prompt so the model can make more accurate predictions.

But here's the question: can the model also generate knowledge before making a prediction? That's what Liu et al. 2022 tried -- generate knowledge to use as part of the prompt. Specifically, how much does this help with tasks like commonsense reasoning?

Let's try a simple prompt:

Prompt:

Part of playing golf is trying to get a higher score than other people. Yes or no?

Output:

Yes.

This answer is wrong: in golf, the lowest score wins. The mistake exposes a limitation of LLMs on tasks that require real-world knowledge. So how do we fix this with generated knowledge?

First, we generate some "knowledge":

Prompt:

Input: Greece is larger than Mexico.

Knowledge: Greece has an area of about 131,957 square kilometers, while Mexico has an area of about 1,964,375 square kilometers, making Mexico much larger than Greece.

Input: Glasses always fog up.

Knowledge: Glasses fog up when water vapor from your breath, sweat, or surrounding humidity condenses on a cooler lens surface, forming tiny droplets that look like a thin film.

Input: Fish can think.

Knowledge: Fish can show learning and memory abilities. Some fish demonstrate problem-solving and can retain information over time, which can help them navigate environments and social interactions.

Input: A common effect of smoking many cigarettes over a lifetime is a higher-than-normal chance of developing lung cancer.

Knowledge: Smoking increases the risk of lung cancer compared to never-smokers. The risk generally rises with greater smoking intensity and duration.

Input: A rock and a pebble are the same size.

Knowledge: In sedimentology (Udden-Wentworth scale), a pebble is typically 4 to 64 mm in diameter. Larger clasts (e.g., cobbles) are 64 to 256 mm, while smaller grains are below 4 mm.

Input: Part of playing golf is trying to get a higher score than other people.

Knowledge:

Knowledge 1:

The goal of golf is to complete a set of holes in as few strokes as possible. A round typically includes 18 holes. Each stroke counts toward your total, and the player with the lowest total number of strokes wins.

Knowledge 2:

Golf is a precision club-and-ball sport where players use various clubs to hit a ball into a series of holes on a course in as few strokes as possible. The objective is to finish with the lowest score, where score is the total number of strokes across all holes.

The few-shot prompt above is the one provided in Liu et al. 2022.
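The knowledge-generation step can be sketched in a few lines of Python. This is a minimal illustration, not the paper's code: `build_knowledge_prompt` and `generate_knowledge` are hypothetical names, only two of the demonstrations are included to keep it short, and `sample` stands in for whatever function sends a prompt to your model and returns its completion.

```python
from typing import Callable, List, Sequence, Tuple

# Two of the few-shot demonstrations from the prompt above.
DEMOS: List[Tuple[str, str]] = [
    (
        "Greece is larger than Mexico.",
        "Greece has an area of about 131,957 square kilometers, while Mexico "
        "has an area of about 1,964,375 square kilometers, making Mexico much "
        "larger than Greece.",
    ),
    (
        "A common effect of smoking many cigarettes over a lifetime is a "
        "higher-than-normal chance of developing lung cancer.",
        "Smoking increases the risk of lung cancer compared to never-smokers. "
        "The risk generally rises with greater smoking intensity and duration.",
    ),
]


def build_knowledge_prompt(
    statement: str, demos: Sequence[Tuple[str, str]] = DEMOS
) -> str:
    """Assemble the few-shot prompt, ending with an open 'Knowledge:' slot."""
    blocks = [f"Input: {inp}\n\nKnowledge: {know}" for inp, know in demos]
    blocks.append(f"Input: {statement}\n\nKnowledge:")
    return "\n\n".join(blocks)


def generate_knowledge(
    statement: str, sample: Callable[[str], str], n: int = 2
) -> List[str]:
    """Sample n knowledge statements.

    `sample` is an assumed callable (prompt -> completion); plug in your own
    model client. Sampling with a non-zero temperature is what would make the
    n statements differ from each other.
    """
    prompt = build_knowledge_prompt(statement)
    return [sample(prompt).strip() for _ in range(n)]
```

Calling `generate_knowledge` with `n=2` mirrors the two knowledge statements shown above, assuming the model is sampled rather than decoded greedily.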

Next step: integrate the knowledge and get a prediction. The question is reformatted into QA format to guide the answer.

Prompt:

Question: Part of playing golf is trying to get a higher score than other people. Yes or no?

Knowledge: The goal of golf is to complete a set of holes in as few strokes as possible. A round typically includes 18 holes. Each stroke counts toward your total, and the player with the lowest total number of strokes wins.

Explanation and answer:

Answer 1 (very high confidence):

No. In golf, the objective is not to get a higher score. Instead, players aim to take as few strokes as possible. The lowest total number of strokes determines the winner.

Question: Part of playing golf is trying to get a higher score than other people. Yes or no?

Knowledge: Golf is a precision club-and-ball sport where players use various clubs to hit a ball into a series of holes on a course in as few strokes as possible. The objective is to finish with the lowest score, where score is the total number of strokes across all holes.

Explanation and answer:

Answer 2 (much lower confidence):

No. In golf, players try to take the fewest strokes possible. The winner is determined by the lowest total number of strokes, not a higher score.

Something interesting happened here. Both answers are correct, but the model was very confident in the first and much less confident in the second. I've simplified the process for demonstration purposes -- there are more nuances when arriving at the final answer. Check the paper for the full details.
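A simplified way to wire the integration step together is sketched below. It assumes that each knowledge statement yields one candidate answer plus a confidence score (for example, derived from token probabilities), and it simply keeps the most confident candidate. The function names and the numeric scores are hypothetical, and the paper's actual procedure has more nuances than this.

```python
from typing import List, Tuple


def build_qa_prompt(question: str, knowledge: str) -> str:
    """Format one question/knowledge pair in the QA layout shown above."""
    return (
        f"Question: {question}\n\n"
        f"Knowledge: {knowledge}\n\n"
        "Explanation and answer:"
    )


def pick_most_confident(scored: List[Tuple[str, float]]) -> str:
    """Return the answer with the highest confidence.

    `scored` holds (answer, confidence) pairs, one per knowledge statement.
    How the confidence is computed is left to the caller (an assumption of
    this sketch).
    """
    if not scored:
        raise ValueError("need at least one (answer, confidence) pair")
    answer, _ = max(scored, key=lambda pair: pair[1])
    return answer


if __name__ == "__main__":
    # Made-up confidences standing in for "very high" and "much lower".
    scored = [("No", 0.95), ("No", 0.60)]
    print(pick_most_confident(scored))
```

Selecting a single best candidate is only one possible design; a majority vote over the candidates would be another.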

❓ FAQ

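What is Generated Knowledge prompting?

Proposed by Liu et al. 2022, it has the LLM "generate knowledge" before answering and uses that knowledge as part of the prompt. It works in two steps: first, a few-shot prompt asks the model to produce knowledge statements relevant to the input; second, the generated knowledge is fed back to the model together with the original question. It is aimed at commonsense reasoning tasks, where it helps cover gaps in the model's commonsense knowledge.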

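Are Generated Knowledge and RAG the same kind of thing?

No. RAG retrieves real documents from an external data source (a vector store, Wikipedia). Generated Knowledge has the LLM produce the knowledge itself, which in essence activates the model's own parametric knowledge. The former is more resistant to hallucination because the information is traceable; the latter needs no vector store but may generate incorrect facts. The two can also be combined: retrieve real knowledge with RAG first, then have the model expand on it.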

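When should you use Generated Knowledge?

Use it for commonsense questions and for scenarios that need simple world knowledge but not strict fact traceability. The paper's example: "Is the goal of golf to get a higher score than other people?" Asked directly, the LLM answers Yes (wrong). If it first generates "golf rules: the fewer strokes, the better" and then answers, the mistake is corrected. It also suits small and mid-sized models; when a large model's commonsense is already strong, the gain shrinks.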

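How does "knowledge confidence" in Generated Knowledge affect the answer?

The original paper makes a key observation: for the same question, two different generated knowledge statements can lead to final answers with clearly different confidence. More precise, more focused knowledge (such as stating directly that "the fewest strokes wins") makes the model answer with more certainty, while vague knowledge makes it hesitate. The final decision should aggregate several knowledge statements rather than rely on a single one.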

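How does Generated Knowledge combine with CoT?

The standard approach: generate knowledge first → append it to the prompt → have the model reason with CoT. The advantage over plain CoT is that the "knowledge layer" is written out explicitly, so the model can cite it as a premise instead of making assumptions out of thin air. You can also go the other way: run CoT first to find which step lacks information, then generate knowledge targeted at that gap, iterating over two rounds.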
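As a rough sketch of the first variant (knowledge first, then CoT), the prompt below lists the generated knowledge as numbered premises and then asks for step-by-step reasoning. The function name and the exact instruction wording are assumptions made for illustration, not a format prescribed by the paper.

```python
from typing import Sequence


def build_knowledge_cot_prompt(question: str, knowledge: Sequence[str]) -> str:
    """Place generated knowledge ahead of a chain-of-thought instruction."""
    # Number the statements so the model can refer to them as premises.
    premises = "\n".join(f"{i}. {k}" for i, k in enumerate(knowledge, start=1))
    return (
        f"Question: {question}\n\n"
        f"Knowledge:\n{premises}\n\n"
        "Using the knowledge above as premises, let's think step by step, "
        "then state the final answer."
    )
```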