ART

Automatic Reasoning and Tool-use: automate the tool-use pipeline

Interleaving chain-of-thought (CoT) prompting with tool use has proven to be a powerful and robust approach to many LLM tasks. But these approaches typically require hand-crafted, task-specific demonstrations and carefully scripted interleaving of model generations with tool calls. Paranjape et al. (2023) proposed a new framework that uses a frozen LLM to automatically generate intermediate reasoning steps as a program.

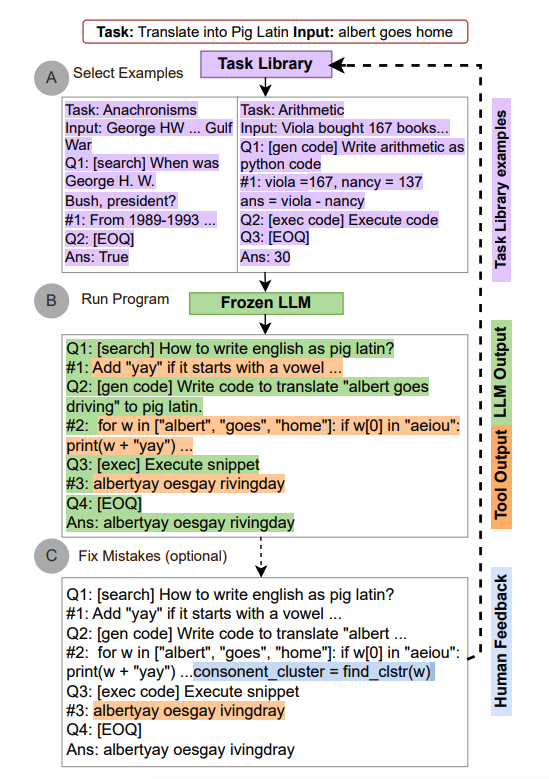

Here's how ART works:

- When given a new task, it selects demonstrations of multi-step reasoning and tool use from a task library.

- At test time, it pauses generation when external tools need to be called, integrates the tool output, then resumes generating.
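The two steps above can be sketched in Python. This is a minimal illustration under stated assumptions, not the implementation from Paranjape et al. (2023): the `[tool: argument]` call syntax, the word-overlap selection heuristic, and the names `select_demonstrations`, `run_with_tools`, `calc`, and `scripted_model` are all hypothetical, and `scripted_model` is a scripted stand-in for a frozen LLM.

```python
import operator
import re
from typing import Callable, Dict, List

# Hypothetical marker for a tool call in generated text, e.g. "[calc: 17 * 3]".
TOOL_CALL = re.compile(r"\[(\w+): ([^\]]*)\]")
OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul}


def select_demonstrations(task: str, library: Dict[str, str], k: int = 1) -> List[str]:
    """Pick the k library entries whose descriptions share the most words with the task.

    Word overlap is an assumed placeholder for whatever selection method is used.
    """
    task_words = set(task.lower().split())
    ranked = sorted(
        library,
        key=lambda desc: len(task_words & set(desc.lower().split())),
        reverse=True,
    )
    return [library[desc] for desc in ranked[:k]]


def run_with_tools(
    generate: Callable[[str], str],
    prompt: str,
    tools: Dict[str, Callable[[str], str]],
    max_steps: int = 10,
) -> str:
    """Generate until a tool call appears, run the tool, append its output, resume."""
    transcript = prompt
    for _ in range(max_steps):
        chunk = generate(transcript)
        call = TOOL_CALL.search(chunk)
        if call is None:
            return transcript + chunk  # no tool call: generation is finished
        name, arg = call.group(1), call.group(2)
        # Pause: keep the text up to and including the call, drop anything after it.
        transcript += chunk[: call.end()]
        # Integrate the tool output, then loop to resume generation.
        transcript += f" -> {tools[name](arg)}\n"
    return transcript


def calc(expr: str) -> str:
    """Toy calculator tool for expressions of the form '<int> <op> <int>'."""
    left, op, right = expr.split()
    return str(OPS[op](int(left), int(right)))


def scripted_model(text: str) -> str:
    """Scripted stand-in for a frozen LLM: asks for the tool once, then answers."""
    if "-> 51" in text:
        return "Answer: 51"
    return "Step 1: [calc: 17 * 3] text after the call is discarded"


# Hypothetical task library: task description -> worked demonstration.
library = {
    "arithmetic word problems": "Q: What is 2 + 3?\nStep 1: [calc: 2 + 3] -> 5\nAnswer: 5",
    "string editing tasks": "Q: Reverse 'abc'.\nStep 1: [reverse: abc] -> cba\nAnswer: cba",
}

demos = select_demonstrations("solve arithmetic problems", library)
prompt = "\n".join(demos) + "\nQ: What is 17 * 3?\n"
result = run_with_tools(scripted_model, prompt, {"calc": calc})
print(result)
```

In this sketch, both libraries are plain dictionaries, so adding a tool or a demonstration amounts to adding an entry. A real system would also need to handle unknown tool names and malformed calls, which the sketch ignores.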

ART guides the model to generalize from the demonstrations so that it can decompose a new task and use tools at the right moments, all without demonstrations written for that task (zero-shot). It is also extensible -- you can manually update the task and tool libraries to fix reasoning errors or add new tools. The process looks like this:

Image source: Paranjape et al. (2023)

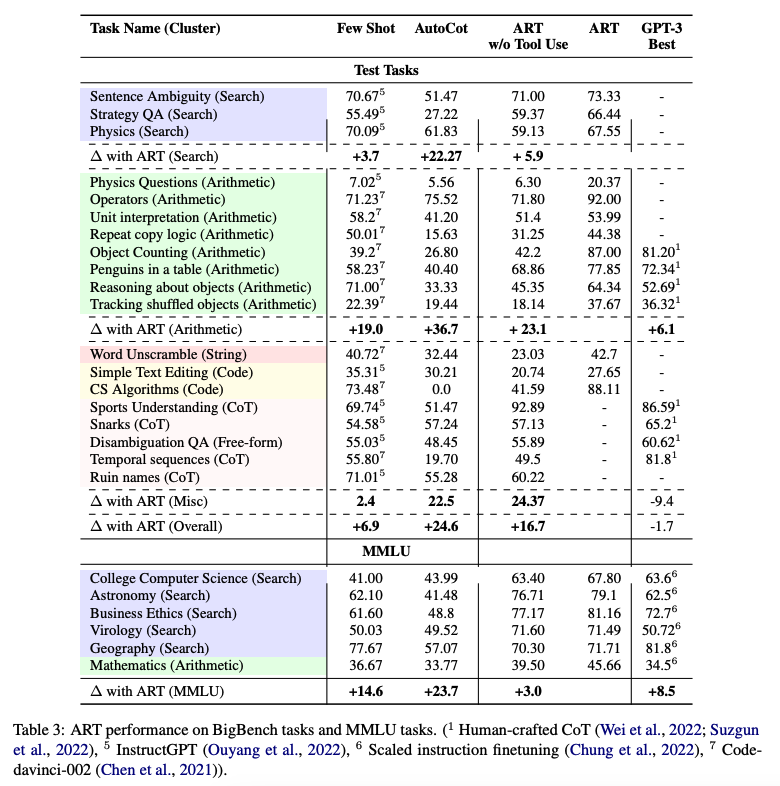

On unseen tasks in the BigBench and MMLU benchmarks, ART substantially outperforms few-shot prompting and automatic CoT. When human feedback is incorporated, it also exceeds the performance of hand-crafted CoT prompts.

Here's the performance table on BigBench and MMLU tasks:

Image source: Paranjape et al. (2023)