Prompt Chaining

Break tasks into steps and generate incrementally for more controllable output

Introduction

To make LLMs more reliable, one key prompting technique is breaking tasks into subtasks. Once you've identified the subtasks, you feed each subtask's prompt to the model and use the result as part of the next prompt. That's prompt chaining -- decomposing a task into subtasks and creating a series of prompt operations.

https://www.youtube.com/embed/CKZC5RigYEc?si=EG1kHf83ceawWdHX

Prompt chaining can handle really complex tasks that an LLM might struggle with using a single detailed prompt. In a chain, each step transforms or processes the generated response until you reach the desired result.

Beyond performance, prompt chaining also improves transparency, controllability, and reliability. You can pinpoint where the model goes wrong and improve performance at specific stages.

It's especially useful for building LLM-powered conversational assistants and creating personalized user experiences.

Prompt Chaining Examples

Prompt Chaining for Document QA

Prompt chains work great for scenarios involving multiple operations or transformations. For example, a common LLM use case is answering questions based on large text documents. You can design two prompts: the first one extracts relevant quotes to answer the question, and the second one uses those quotes plus the original document to generate the actual answer.

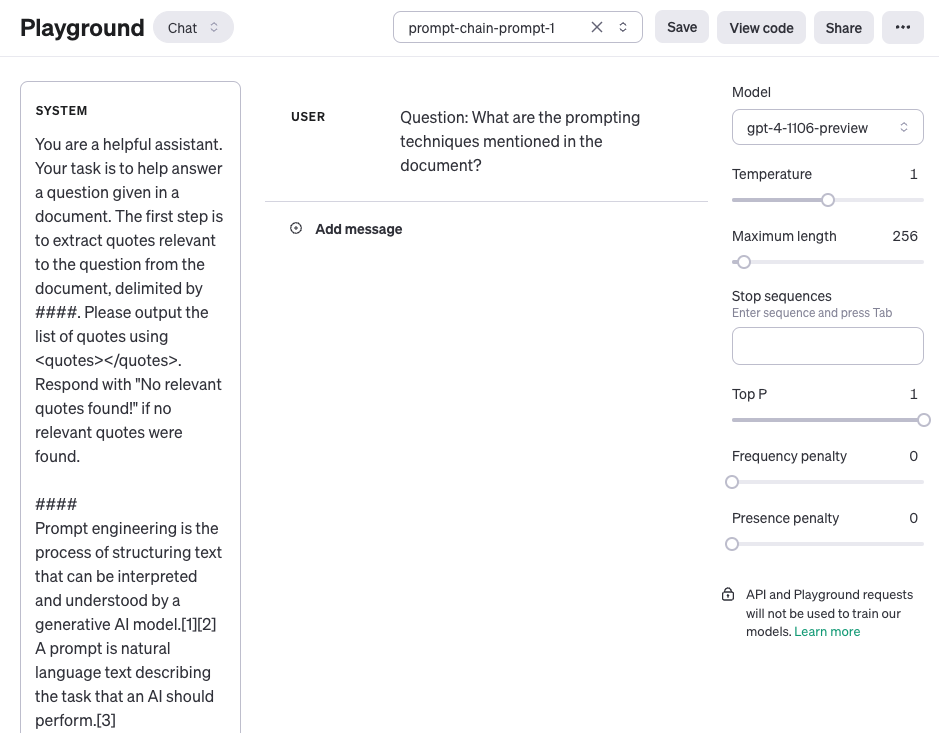

The first prompt below extracts relevant quotes from a document based on a question. For simplicity, we've added a placeholder {{document}}. To test this, you could copy-paste a Wikipedia article, like the one on Prompt Engineering. Since this uses a long context, we went with OpenAI's gpt-4-1106-preview. You can also use other long-context LLMs like Claude.

Prompt 1:

You are a helpful assistant. Your task is to answer a question based on a document.

Step 1: Extract quotes that are relevant to the question from the document delimited by ####.

Output the quote list using <quotes></quotes>. If you cannot find any relevant quotes, respond with "No relevant quotes found!".

####

{{document}}

####

Here's a screenshot of the full prompt, including the question passed via the user role.

Prompt 1 output:

<quotes>

- Chain-of-thought (CoT) prompting[27]

- Generated knowledge prompting[37]

- Least-to-most prompting[38]

- Self-consistency decoding[39]

- Complexity-based prompting[41]

- Self-refine[42]

- Tree-of-thought prompting[43]

- Maieutic prompting[45]

- Directional-stimulus prompting[46]

- Textual inversion and embeddings[59]

- Using gradient descent to search for prompts[61][62][63][64]

- Prompt injection[65][66][67]

</quotes>

The quotes returned from the first prompt now become input for the second prompt. You could clean them up -- say, remove citation markers -- using another prompt in the chain. But for now, let's skip that. The second prompt takes the extracted quotes and generates a helpful answer based on both the document and the quotes:

Prompt 2:

Using the relevant quotes extracted from the document (delimited by <quotes></quotes>) and the original document (delimited by ####), write an answer to the question. Make sure the answer is accurate, friendly, and helpful.

####

{{document}}

####

<quotes>

- Chain-of-thought (CoT) prompting[27]

- Generated knowledge prompting[37]

- Least-to-most prompting[38]

- Self-consistency decoding[39]

- Complexity-based prompting[41]

- Self-refine[42]

- Tree-of-thought prompting[43]

- Maieutic prompting[45]

- Directional-stimulus prompting[46]

- Textual inversion and embeddings[59]

- Using gradient descent to search for prompts[61][62][63][64]

- Prompt injection[65][66][67]

</quotes>

Prompt 2 output:

The prompt techniques mentioned in the document include:

1. Chain-of-thought (CoT) prompting[27]

2. Generated knowledge prompting[37]

3. Least-to-most prompting[38]

4. Self-consistency decoding[39]

5. Complexity-based prompting[41]

6. Self-refine[42]

7. Tree-of-thought prompting[43]

8. Maieutic prompting[45]

9. Directional-stimulus prompting[46]

10. Textual inversion and embeddings[59]

11. Using gradient descent to search for prompts[61][62][63][64]

12. Prompt injection[65][66][67]

Each technique applies a distinct strategy to improve or constrain interactions with large language models to produce desired outputs.

As you can see, simplifying and creating prompt chains is a useful approach when responses need multiple operations or transformations. As an exercise, try designing a prompt that strips the citation markers (e.g., [27]) from the response before sending it to the end user.

You can also find more prompt chaining examples in this documentation, which uses the Claude LLM. Our examples were inspired by and adapted from theirs.

📚 相关资源

❓ 常见问题

关于本章主题最常被搜索的问题,点击展开答案

Prompt Chaining 是什么?为什么不用一个长 prompt 搞定?

Prompt chaining 是把任务拆成多个子任务,每步一个 prompt,前一步输出作为后一步输入。一个超长 prompt 在复杂任务上 LLM 可能无法可靠完成;分段后透明度、可控性、可靠性都提升——错在哪一步可以单独定位、单独优化。

文档问答里典型的两步链长什么样?

第一步:让模型从 `####{{document}}####` 里抽出与问题相关的引文,输出包在 `<quotes></quotes>` 里,找不到就回 `No relevant quotes found!`;第二步:把第一步抽到的引文 + 原文档作为输入,让模型基于引文写最终答案。教程用 `gpt-4-1106-preview` 跑,长上下文场景也能换 Claude。

Prompt Chaining 最大的好处是什么?

性能之外的三个工程价值:透明度(能看到每一步中间结果)、可控性(每步可独立改 prompt)、可靠性(错在哪一步立刻定位)。对话助手和个性化 LLM 应用尤其受益——一个 chain 跑完用户能看到「检索→筛选→回答」全过程,比黑盒更可信。

怎么把第一步的输出干净地传给第二步?

用结构化标签包起来,例如第一步输出 `<quotes>...</quotes>`,第二步 prompt 里直接引用这段标签内容并配 `####原文档####` 双分隔符。教程示例第二步指令:`Using the relevant quotes (delimited by <quotes></quotes>) and the original document (delimited by ####), write an answer`——分隔符各司其职,模型不会混淆数据来源。

Prompt Chaining 跟 CoT 是同一回事吗?

不是。CoT 是单个 prompt 内让模型自己生成推理链,所有步骤在一次推理里完成;Prompt chaining 是多个独立 prompt 调用,每次的输出经过外部代码处理(清洗引文、移除引用标志 `[27]`)后再喂下一步。Chain 给你工程介入点,CoT 不给。